In the first of our five-part Tailings series, Janina Elliott, Global Central Technical Lead at Seequent, shares Seequent’s workflow solution that is helping safeguard operations with digital twin technology.

Environmental, socio-economic and political risks, along with a need to digitally transform the mining industry, have put Tailings Storage Facilities (TSFs) in the spotlight. To truly learn from a failure event and fulfil the goal of the global standard, complete transparency regarding the chain of events is essential.

In this session, Janina discusses the vast and complex challenges the industry is facing, what a dynamically updated digital twin is and its role in bridging the physical and digital world, the power of 3D visualization and modelling, and how to uncover valuable insights from data and share across teams.

Overview

Speakers

Janina Elliott

Global Central Technical Lead – Seequent

Duration

32 min

Video Transcript

[00:00:01.100]

<v Janina>Hello,</v>

[00:00:01.933]

and welcome to the first installment of our webinar series,

[00:00:05.927]

“Seequent’s Dynamic Digital Twin Solution

[00:00:09.170]

for Modern Tailings Storage Facilities Management.”

[00:00:12.710]

My name is Dr. Janina Elliott,

[00:00:14.540]

Seequent’s Global Central Technical Lead.

[00:00:16.820]

And today I will guide you through

[00:00:18.710]

the first portion of our proposed workflow,

[00:00:21.710]

from data acquisition to an integrated 3D model in Leapfrog.

[00:00:27.990]

Okay.

[00:00:28.823]

Before we begin, let’s do a little housekeeping.

[00:00:31.760]

At this time,

[00:00:32.593]

I would like to make a statement of confidentiality

[00:00:35.360]

and provide a disclaimer.

[00:00:37.250]

Please note that this presentation

[00:00:38.900]

is for informational purposes only,

[00:00:40.890]

and is not a commitment to deliver

[00:00:42.880]

software features or functionality.

[00:00:46.320]

The software products that will be shown today

[00:00:48.400]

are the latest versions of Leapfrog Geo and Central.

[00:00:51.840]

And despite the webinar’s technical connotation,

[00:00:54.630]

the presentation is designed for a wide audience,

[00:00:57.650]

from both the technical and non-technical domains.

[00:01:01.820]

During the webinar, the audience is muted

[00:01:04.010]

to ensure that the presentation doesn’t run over time.

[00:01:07.230]

But should you have any questions,

[00:01:09.070]

please don’t hesitate to write them

[00:01:10.630]

into the question window in GoToMeeting.

[00:01:13.626]

We will make sure that a personalized reply

[00:01:15.600]

will be sent to you via email in due time.

[00:01:19.040]

After the webinar,

[00:01:20.080]

we would like to ask you to remain

[00:01:21.630]

for one or two minutes longer

[00:01:23.450]

to partake in a short survey

[00:01:25.310]

that will help us understand your needs

[00:01:27.000]

and learn how we can improve our offerings.

[00:01:29.840]

And as always, if you wish to maintain

[00:01:32.380]

or share a recording of this webinar,

[00:01:34.700]

a link to the video will be sent to you

[00:01:36.630]

shortly after the presentation.

[00:01:38.960]

Okay, so let’s get started.

[00:01:42.710]

Before we go ahead and jump into the demonstration,

[00:01:45.680]

I would like to introduce ourselves

[00:01:47.180]

to the viewers that haven’t met us yet.

[00:01:49.460]

So who is Seequent?

[00:01:51.530]

Some of you may have heard of us

[00:01:52.840]

through one of our subsurface modeling applications,

[00:01:55.490]

such as Oasis montaj, Leapfrog, or GeoStudio,

[00:01:59.090]

but others may never have had a touch point.

[00:02:01.900]

We are a software company that builds solutions

[00:02:04.350]

for the geoscientific community.

[00:02:06.660]

And it is our mission to enable our customers

[00:02:09.060]

to make better decisions about the earth,

[00:02:11.730]

environment, and energy challenges.

[00:02:14.750]

Because it is that robust decision-making process

[00:02:18.180]

that provides security and longevity to your organization.

[00:02:22.840]

Seequent has a truly global presence with over 430 staff.

[00:02:26.650]

But not only do we hire geologists from the mining side,

[00:02:29.670]

we also bring in experts from other geoscientific fields

[00:02:32.640]

and provide active solutions

[00:02:34.210]

to the civil, energy, and environmental sectors.

[00:02:37.880]

And through this cross-sector engagement

[00:02:40.160]

with our global customers,

[00:02:41.920]

our team continuously learns and develops

[00:02:45.340]

new cutting edge technology

[00:02:47.400]

that aspires to support you in your decision-making.

[00:02:53.510]

Now, arguably one of the most important decisions

[00:02:56.330]

the global mining community recently made,

[00:02:58.990]

was to widely commit to an improved due diligence process

[00:03:02.780]

regarding the safe and sustainable design,

[00:03:05.200]

construction, maintenance, and remediation

[00:03:08.440]

of tailings storage facilities.

[00:03:11.320]

This commitment was formalized

[00:03:13.290]

in the Global Industry Standard on Tailings Management,

[00:03:16.430]

released in early August 2020.

[00:03:18.650]

And since then, operators and consultancies alike

[00:03:21.940]

have begun to investigate new strategies and technologies

[00:03:26.480]

that would allow them to fulfill

[00:03:27.930]

the ultimate goal of the standard,

[00:03:30.170]

to cause zero harm to people and the environment.

[00:03:33.950]

But to truly be able to effect change

[00:03:36.270]

and learn from failure events,

[00:03:38.600]

it is essential that there is complete transparency

[00:03:42.190]

regarding the chain of events.

[00:03:44.350]

All data, analysis, and decision-making processes

[00:03:48.370]

need to be clearly understood at any given time,

[00:03:51.310]

and ideally, in real-time.

[00:03:53.730]

However, to achieve this objective

[00:03:56.220]

is easier said than done.

[00:04:00.370]

Monitoring and understanding the ever-changing data

[00:04:03.870]

coming from some of the largest man-made structures on earth

[00:04:07.400]

is inherently challenging.

[00:04:09.610]

Our conversations with senior management highlight

[00:04:12.300]

that reports we see from a multitude of storage facilities

[00:04:15.810]

vary not only in location, age, and conditions,

[00:04:19.120]

but also in terms of the techniques applied

[00:04:21.540]

to monitor and test scenarios for potential failure.

[00:04:25.920]

This distinct lack of standardization makes the overall

[00:04:29.620]

monitor-to-model-to-design workflow

[00:04:32.420]

unnecessarily complex,

[00:04:34.470]

consumes resources and introduces risk.

[00:04:38.400]

In addition, supervisors and reviewers also fear

[00:04:41.490]

that the local technical teams struggle

[00:04:43.330]

not only with inconsistent file formats and compatibility,

[00:04:47.300]

but the absence of multidisciplinary interaction

[00:04:50.430]

and sufficient comprehension,

[00:04:52.470]

that is a cause for miscommunication.

[00:04:55.400]

As such, both managers and technical teams agree

[00:04:58.910]

that they’re not fully confident

[00:05:00.480]

that a comprehensive picture of all the assets is delivered,

[00:05:03.940]

and that problems might be missed.

[00:05:09.100]

To create a truly comprehensive and live model

[00:05:12.500]

of a tailings storage facility,

[00:05:14.710]

all the groups involved need to work and communicate

[00:05:17.630]

effectively as a team,

[00:05:19.560]

and ensure consistent data transparency.

[00:05:23.310]

These underlying principles are what allows

[00:05:25.630]

for a robust review and decision-making process

[00:05:28.930]

throughout the life cycle of a site.

[00:05:31.690]

But how is this best accomplished?

[00:05:33.800]

Firstly, all stakeholders in a project

[00:05:36.150]

whether modelers from different geoscientific groups,

[00:05:38.770]

project managers, or third parties,

[00:05:41.030]

such as consultants and JV partners,

[00:05:43.760]

need to have access to the latest data

[00:05:45.730]

in as near real-time as possible.

[00:05:48.560]

Secondly, everyone needs to work collaboratively

[00:05:52.030]

from a single source of truth

[00:05:54.250]

to create up-to-date models that facilitate

[00:05:56.870]

the development of a digital twin,

[00:05:59.640]

that is the complete conceptualization

[00:06:02.260]

of the physical system through a model,

[00:06:04.700]

numeric or otherwise.

[00:06:07.070]

Thirdly, to build the digital twin,

[00:06:09.720]

all modelers need to understand the data contextually.

[00:06:14.040]

Each geoscientific group views and utilizes

[00:06:16.870]

raw data differently.

[00:06:19.030]

And, you need to be able to trace data from the origin

[00:06:22.870]

throughout its transformation process.

[00:06:25.410]

And finally,

[00:06:26.710]

every stakeholder needs to have access to the team

[00:06:29.700]

and its collective intelligence,

[00:06:32.160]

that is to each other’s thought processes and expertise

[00:06:36.230]

to collaboratively analyze,

[00:06:38.390]

iteratively refine the digital twin,

[00:06:40.860]

and then take the next steps together

[00:06:43.280]

to arrive at robust target ranges

[00:06:45.550]

for the reviewing decision-maker.

[00:06:48.540]

When all of these conditions are met,

[00:06:50.790]

then tailings governance can shift

[00:06:52.810]

from a dominantly reactive, long-term modeling approach

[00:06:56.800]

to a more agile, even predictive, short-term one.

[00:07:03.280]

Now, Seequent has listened very closely,

[00:07:05.920]

and we have developed an integrated solution

[00:07:08.720]

in which Seequent modeling and data management products

[00:07:12.300]

are used in an intuitive workflow

[00:07:14.560]

to develop a digital twin.

[00:07:17.150]

The overall workflow begins by collecting

[00:07:19.470]

various types of geospatial data,

[00:07:22.330]

collected across the tailings site.

[00:07:24.580]

This may include drilling and GIS data,

[00:07:27.560]

but also geochemical and geophysical information,

[00:07:30.570]

whereby the latter can be fully analyzed

[00:07:32.670]

in Seequent’s Oasis montaj software.

[00:07:35.940]

All of these data points

[00:07:37.030]

are then introduced into the Leapfrog suite

[00:07:39.200]

to create an implicit geological model.

[00:07:42.790]

At the same time,

[00:07:43.860]

hydrogeological data from boreholes,

[00:07:46.060]

piezometric measuring stations, and more,

[00:07:48.460]

is collected to create a hydrogeological model,

[00:07:51.290]

either with the help of Leapfrog

[00:07:53.140]

or through our partner products,

[00:07:54.890]

such as FEFLOW and MODFLOW.

[00:07:58.000]

The 2D and 3D results

[00:07:59.900]

of the hydrogeological and geological models,

[00:08:02.900]

as well as engineering data

[00:08:04.410]

and material property information,

[00:08:06.720]

are then communicated and shared

[00:08:08.490]

with the geotechnical engineers.

[00:08:10.760]

The team is then able to carry out

[00:08:12.620]

long and short-term stability analysis through GeoStudio

[00:08:16.570]

to arrive at a geotechnical model.

[00:08:19.680]

In combination, all spatial and numeric models

[00:08:23.370]

provide the means to define a true digital twin

[00:08:26.860]

of the tailings environment.

[00:08:29.020]

Through Central, this collective information

[00:08:31.700]

can then be shared, version tracked,

[00:08:33.940]

and peer-reviewed by all the stakeholders

[00:08:36.900]

to define the next steps in the cycle,

[00:08:39.670]

and continuously update the digital twin

[00:08:41.800]

as the tailings site evolves.

[00:08:45.170]

In today’s webinar,

[00:08:46.630]

we will tackle the first portion of the workflow,

[00:08:50.300]

and show how raw field data can be effectively stored,

[00:08:53.950]

version tracked, and communicated through Central,

[00:08:56.760]

and actively integrated into a dynamic 3D model

[00:09:00.240]

in Leapfrog for subsequent analysis.

[00:09:03.330]

Okay, so let’s start by looking at Seequent Central.

[00:09:08.760]

Just in case you haven’t come across

[00:09:10.340]

Seequent Central as of yet,

[00:09:12.300]

Central is a cloud-based data and model management system

[00:09:15.690]

that provides a platform not only for the exchange

[00:09:18.810]

and retention of data,

[00:09:20.370]

but also cross-collaborative communication

[00:09:22.690]

in a 3D environment.

[00:09:24.760]

Central also facilitates the holistic integration

[00:09:27.430]

of data coming from a variety of software programs,

[00:09:30.300]

and as such, assists in a breakdown

[00:09:32.550]

of perceived technical and disciplinary barriers

[00:09:35.680]

between traditionally siloed groups.

[00:09:38.700]

And here we are in the Central portal,

[00:09:41.690]

the hub where all tailings facility stakeholders

[00:09:44.680]

can interactively communicate their work,

[00:09:47.380]

visualize Leapfrog models,

[00:09:48.920]

and build an auditable record

[00:09:51.230]

of project specific data and models.

[00:09:54.550]

As I mentioned earlier,

[00:09:55.740]

teamwork and data transparency are key factors,

[00:09:59.030]

and underpin the entirety of the indicated workflow.

[00:10:02.920]

As such, Central will resurface multiple times,

[00:10:06.370]

whenever major milestones are achieved

[00:10:08.300]

in the modeling process of the digital twin

[00:10:10.640]

that require preservation and communication.

[00:10:14.450]

For today’s workflow component,

[00:10:16.280]

we focus only on a subset of Central’s many features,

[00:10:19.910]

which we will explore in more detail

[00:10:21.720]

in the third installment of our webinar series.

[00:10:26.360]

We begin by having a closer look

[00:10:28.100]

at the data room environment of our demo project,

[00:10:31.410]

which resides in the files tab.

[00:10:36.860]

Each project stored in Central

[00:10:38.570]

is outfitted with a unique data room,

[00:10:40.930]

where you and all other project stakeholders

[00:10:42.980]

have the opportunity to store and version track

[00:10:46.490]

their project specific data,

[00:10:48.350]

no matter their software origin.

[00:10:50.550]

So once you’ve mapped out who provides essential data

[00:10:53.300]

on a regular basis and requires access,

[00:10:56.080]

you can create a custom folder structure.

[00:10:59.000]

Within each folder,

[00:11:00.140]

different files are chronologically organized,

[00:11:02.430]

which makes it easy to find the latest geotechnical report,

[00:11:05.860]

the latest lidar files and drone imagery,

[00:11:08.780]

or up-to-date water table point data, as shown here.

[00:11:12.950]

Should you utilize geophysics

[00:11:14.630]

for non-intrusive investigation of your TSF site,

[00:11:18.230]

then you’d be pleased to hear

[00:11:19.370]

that Oasis montaj-generated grids and voxels

[00:11:22.560]

can be directly linked to and stored within

[00:11:25.560]

the Central data room.

[00:11:27.330]

Another great advantage of the Central system

[00:11:30.180]

that goes beyond simple data retention,

[00:11:32.670]

is the integrated nature of our Seequent software.

[00:11:36.460]

As such, specific file types,

[00:11:38.520]

for example, aforementioned 2D grids,

[00:11:41.160]

point files, borehole tables,

[00:11:43.490]

polylines, planar structural data, and meshes,

[00:11:46.550]

can be dynamically linked to a live 3D Leapfrog model.

[00:11:51.100]

Once linked, the Central system notifies the modeler

[00:11:55.020]

that a project team member has introduced new data

[00:11:58.050]

that is ready for instant use,

[00:12:00.160]

thus aiding a real-time flow of information.

[00:12:03.430]

Now, let’s have a look at that data transfer in Leapfrog.

[00:12:09.790]

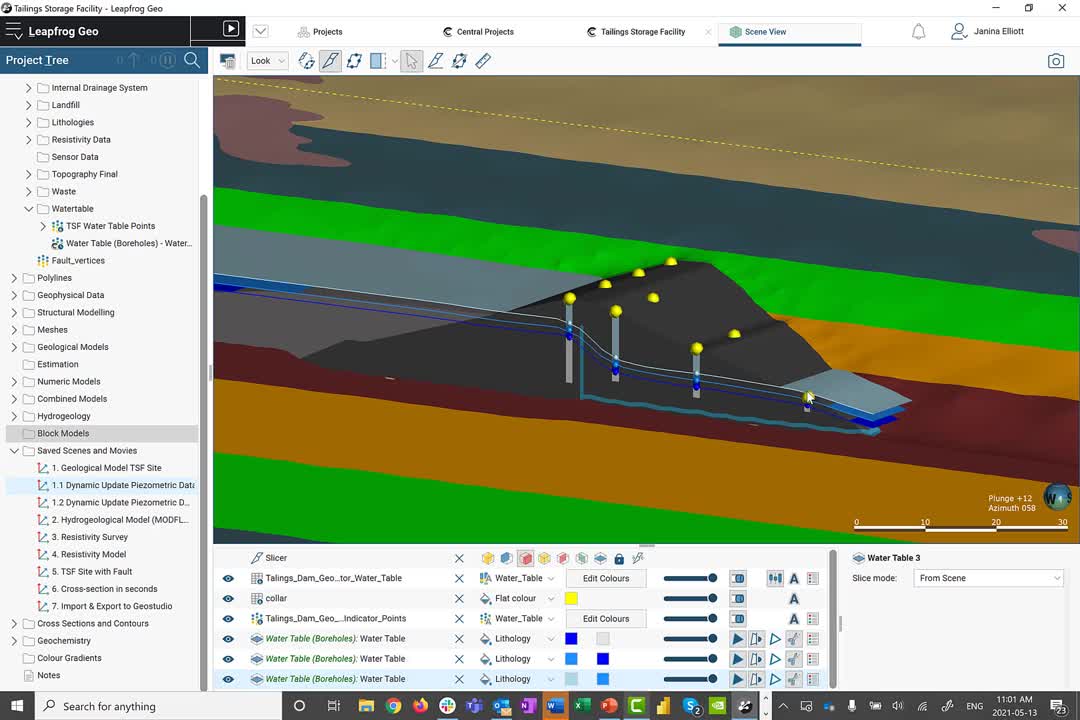

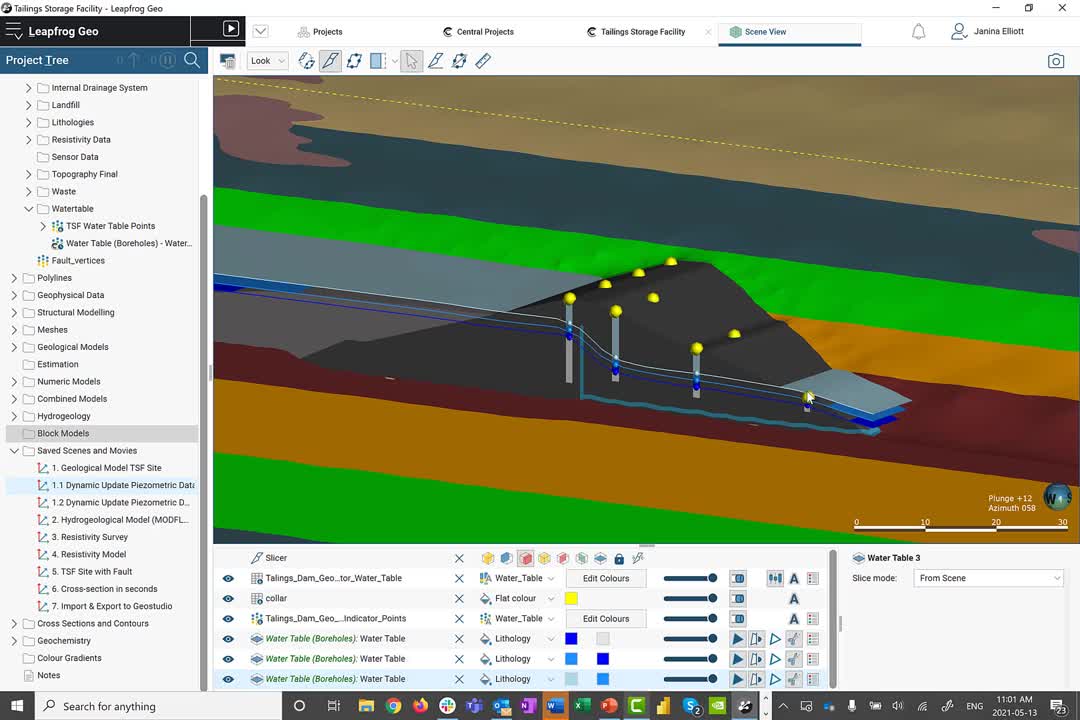

And here we are in the Leapfrog modeling suite,

[00:12:12.630]

where the dominant portion of the geospatial data

[00:12:15.340]

collected at site can be correlated and evaluated

[00:12:19.450]

in a comprehensive 3D model,

[00:12:22.160]

which represents a significant component

[00:12:24.310]

of the complete digital twin.

[00:12:26.980]

As you can see, Leapfrog is organized

[00:12:28.730]

in a very intuitive way.

[00:12:30.900]

On the left-hand side, we have the project tree,

[00:12:33.480]

which allows you to import geospatial field data

[00:12:36.550]

from multiple sources, including various databases,

[00:12:40.630]

and of course, Central.

[00:12:43.210]

The principal idea here is to start on the surface

[00:12:46.610]

and introduce topography meshes,

[00:12:49.180]

GIS data, and drone imagery,

[00:12:51.610]

that can be adjusted over time

[00:12:53.120]

to document the evolution of the project site.

[00:12:56.410]

Then you can step into the subsurface,

[00:12:58.730]

and introduce raw data relating to invasive

[00:13:02.190]

or non-invasive investigative methods.

[00:13:05.680]

These include borehole information,

[00:13:07.750]

designs relating to above or below-surface structures,

[00:13:11.590]

points relating to monitoring data

[00:13:13.670]

and other numeric measurements,

[00:13:16.050]

polylines, geophysical data, and even structural data.

[00:13:20.410]

As I mentioned earlier,

[00:13:21.700]

the dominant number of these data types

[00:13:23.950]

can be dynamically linked and sourced from Central.

[00:13:27.950]

Let me give you a quick example

[00:13:30.350]

here regarding the points folder.

[00:13:32.780]

To access data, all you need to do is to right click

[00:13:36.240]

and choose “Import Points from Central.”

[00:13:39.610]

This action will grant you access

[00:13:41.690]

to any Central project that you are privy to,

[00:13:44.880]

as well as the data collected in its modeling history,

[00:13:47.880]

and of course, its data room.

[00:13:50.780]

Once accessed, the link is established

[00:13:53.390]

and the point file is transferred.

[00:13:56.060]

As soon as the new version

[00:13:57.300]

has been made available in the data room,

[00:13:59.680]

for example, through another team member or consultant,

[00:14:02.760]

you’re automatically notified through a small clock symbol

[00:14:06.150]

right here in your Leapfrog project.

[00:14:08.760]

It is then up to you to decide

[00:14:10.630]

whether you want to go ahead

[00:14:12.050]

and use the new data right away,

[00:14:13.790]

to update your current model,

[00:14:15.620]

or build a second interpretation in parallel.
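
The notify-then-decide behaviour described here can be pictured with a small sketch. This is not the Central or Leapfrog API: the class names `DataRoomFile`, `FileVersion`, and `LinkedObject`, the file name, and the authors are all hypothetical, chosen only to illustrate how a linked object might learn that a newer version exists while leaving the choice to reload with the modeler.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class FileVersion:
    """One upload of a file (hypothetical structure, for illustration only)."""
    number: int
    author: str
    comment: str
    uploaded_at: datetime


@dataclass
class DataRoomFile:
    """A file in a data room that keeps every uploaded version."""
    name: str
    versions: list = field(default_factory=list)

    def upload(self, author, comment):
        version = FileVersion(
            number=len(self.versions) + 1,
            author=author,
            comment=comment,
            uploaded_at=datetime.now(timezone.utc),
        )
        self.versions.append(version)
        return version

    @property
    def latest(self):
        return self.versions[-1]


@dataclass
class LinkedObject:
    """A model input that remembers which file version it was built from."""
    source: DataRoomFile
    linked_version: int

    def is_out_of_date(self):
        # Plays the role of the "clock symbol": a newer version exists upstream.
        return self.source.latest.number > self.linked_version

    def reload_latest(self):
        # The modeler chooses when to pull the new data into the model.
        self.linked_version = self.source.latest.number


# Hypothetical usage: a consultant uploads new readings after the link is made.
piezo = DataRoomFile("water_table_points.csv")
piezo.upload("field team", "Initial piezometer survey")
link = LinkedObject(source=piezo, linked_version=piezo.latest.number)

piezo.upload("consultant", "Added this month's readings")
stale_before = link.is_out_of_date()

link.reload_latest()
stale_after = link.is_out_of_date()
```

Keeping the linked version number on the model side, rather than overwriting data automatically, is what leaves room for building a second interpretation in parallel.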

[00:14:19.620]

And how do we go about building a 3D model in Leapfrog?

[00:14:23.680]

The raw data collected in the folders

[00:14:25.570]

at the top of the project tree

[00:14:27.160]

can be actively linked

[00:14:28.780]

to the subsequent modeling folders below,

[00:14:31.270]

such as the geological models folder, estimation,

[00:14:34.450]

numeric interpolant models, and so on.

[00:14:37.530]

Here, the geospatial data is then evaluated

[00:14:41.530]

by a mathematical algorithm

[00:14:43.950]

that creates an implicit 3D model.

[00:14:49.680]

To visualize what that means,

[00:14:51.760]

let me give you an example utilizing water table information

[00:14:55.180]

collected in boreholes that vary over time.

[00:14:58.890]

The principal idea of an implicit 3D model

[00:15:01.720]

is that we take advantage of a mathematical algorithm

[00:15:05.070]

in order to ease our work.

[00:15:07.370]

The mathematical algorithm Leapfrog uses

[00:15:09.800]

is called the Fast Radial Basis Function,

[00:15:13.020]

which is akin to dual kriging.

[00:15:15.620]

What it does, is that it takes each individual XYZ point,

[00:15:19.970]

and statistically evaluates them against each other

[00:15:23.690]

to then create a best fit surface

[00:15:26.040]

that passes through each individual data point.

[00:15:29.290]

This surface then represents a water table,

[00:15:31.960]

a geological contact,

[00:15:33.440]

or a hypothetical domain boundary

[00:15:35.120]

representative of a specific value, et cetera.
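
To make the idea of a surface that passes through every data point concrete, here is a minimal sketch of generic radial basis function interpolation. It is not Leapfrog's Fast Radial Basis Function implementation, whose internals are not described in this talk. It assumes a simple linear kernel (the distance itself) and a plain dense solver, and the piezometer readings are invented for illustration.

```python
import math


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x


def fit_rbf_surface(points):
    """Fit z = f(x, y) through every (x, y, z) point using a linear RBF kernel."""
    xy = [(x, y) for x, y, _ in points]
    z = [p[2] for p in points]
    # Kernel matrix: entry (i, j) is the distance between points i and j.
    kernel = [[math.dist(p, q) for q in xy] for p in xy]
    weights = solve(kernel, z)

    def surface(x, y):
        return sum(w * math.dist((x, y), q) for w, q in zip(weights, xy))

    return surface


# Hypothetical water table elevations (easting m, northing m, elevation m).
readings = [
    (0.0, 0.0, 101.2),
    (100.0, 0.0, 100.4),
    (0.0, 100.0, 102.0),
    (100.0, 100.0, 100.9),
    (50.0, 50.0, 101.0),
]
water_table = fit_rbf_surface(readings)
print(water_table(25.0, 75.0))  # elevation estimate between the boreholes
```

Because the weights are solved from the same kernel matrix that is used for evaluation, the fitted surface reproduces each measured elevation at its own location. Production tools use far faster solvers and richer kernels than this dense, small-data sketch.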

[00:15:39.270]

So let’s have a closer look

[00:15:40.420]

at our active water table model here.

[00:15:43.580]

Each surface shown is organized

[00:15:45.900]

in a surface chronology folder,

[00:15:48.050]

which means that these surfaces are also placed

[00:15:50.490]

in a stratigraphic or chronological context with each other.

[00:15:55.110]

When I open up the surface,

[00:15:57.070]

we can see the overall dependencies

[00:15:59.720]

captured all the way to the hyperlinked source information.

[00:16:04.370]

Once the source information is updated,

[00:16:07.480]

the new data points will automatically flow

[00:16:10.320]

into the dynamically linked surface,

[00:16:12.820]

and the Fast Radial Basis Function will modify

[00:16:15.880]

the surface interpretation

[00:16:17.420]

to match the new statistics.

[00:16:20.190]

It is then up to the modeler

[00:16:21.860]

to apply their expert knowledge,

[00:16:23.940]

to identify if the surface needs to be altered manually

[00:16:27.660]

to match the natural environment.

[00:16:29.770]

For example,

[00:16:30.760]

in areas where data is scarce,

[00:16:32.880]

you can add explicit modeling methods

[00:16:35.290]

to augment the implicit model.

[00:16:37.610]

You can introduce polylines and additional data points

[00:16:41.030]

that support the building of a best fit surface environment

[00:16:44.200]

that takes all of the information into account.

[00:16:47.700]

Through this modeling method,

[00:16:49.470]

you have an opportunity

[00:16:50.690]

not only to arrive at a model very quickly,

[00:16:53.650]

but build a number of interpretations

[00:16:56.170]

based on the same data.

[00:16:58.360]

These interpretations can then be peer-reviewed,

[00:17:01.720]

and you can collaboratively arrive

[00:17:03.750]

at the best possible representation of the physical system

[00:17:07.710]

to support the development of the overall digital twin.

[00:17:16.860]

Once the geological interpretation is established,

[00:17:19.980]

you can go ahead and utilize the information

[00:17:22.600]

to create hydrogeological models within Leapfrog.

[00:17:26.400]

In a hydrogeological modeling folder,

[00:17:28.670]

you can build active grids that can then be introduced

[00:17:31.470]

into our partner products, such as MODFLOW and FEFLOW,

[00:17:35.310]

for subsequent flow modeling.

[00:17:37.490]

Now, what does this exactly look like?

[00:17:39.550]

Here’s an example of a finalized model.

[00:17:42.790]

To build a brand new model,

[00:17:44.550]

all we have to do is to right click

[00:17:46.340]

into the hydrogeological model folder,

[00:17:48.820]

choose MODFLOW for example,

[00:17:51.160]

and click on “New Structured Model.”

[00:17:54.070]

The principal idea is that you can pick

[00:17:56.140]

any previously created geological model

[00:17:59.330]

as a foundation for your hydrogeological grid structure.

[00:18:02.850]

Here, I’m going to go with my TSF model,

[00:18:06.400]

built in the geological modeling folder,

[00:18:08.910]

and introduce the specific lithological surfaces

[00:18:12.200]

that I deem appropriate

[00:18:13.700]

for this particular hydrogeological model.

[00:18:16.910]

Once in place,

[00:18:18.030]

I can also decide how to separate the individual layers,

[00:18:21.910]

and what kind of grid structure I wish to develop.

[00:18:25.390]

So for example,

[00:18:26.400]

what kind of grid size or cell spacing I wish to employ,

[00:18:29.760]

et cetera.
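
As a rough illustration of what choosing a cell spacing implies, the sketch below derives row and column counts and cell centres for a regular grid over a rectangular extent. The function name `structured_grid`, the extent, and the 50 m spacing are assumptions made for this example and do not reflect Leapfrog's or MODFLOW's own grid definitions.

```python
import math


def structured_grid(x_min, x_max, y_min, y_max, cell_size):
    """Return (n_rows, n_cols, centres) for a regular grid covering the extent."""
    # Round up so the grid always covers the full extent.
    n_cols = math.ceil((x_max - x_min) / cell_size)
    n_rows = math.ceil((y_max - y_min) / cell_size)
    centres = [
        (x_min + (col + 0.5) * cell_size, y_min + (row + 0.5) * cell_size)
        for row in range(n_rows)
        for col in range(n_cols)
    ]
    return n_rows, n_cols, centres


# Hypothetical TSF footprint of 1000 m by 600 m with 50 m cells.
n_rows, n_cols, centres = structured_grid(0.0, 1000.0, 0.0, 600.0, 50.0)
```

Halving the cell size quadruples the number of cells per layer, which is the trade-off between resolution and run time that the choice of spacing controls.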

[00:18:31.340]

Now, in this particular case,

[00:18:33.220]

I have already prepared one of the models.

[00:18:36.170]

And what I’d like to show you,

[00:18:37.400]

is that in addition to defining your grid,

[00:18:40.160]

you also have the opportunity to set a dry head value,

[00:18:43.000]

as well as edit the hydrogeological properties

[00:18:45.320]

of the model ahead of time.

[00:18:47.810]

Once ready,

[00:18:48.870]

export the information directly to MODFLOW,

[00:18:51.360]

or MODFLOW for GWV.

[00:18:59.154]

Now, this particular project

[00:19:00.850]

has been actively outfitted with geological,

[00:19:03.540]

hydrogeological, and geophysical data.

[00:19:06.810]

More specifically, resistivity information.

[00:19:10.200]

The resistivity data was calculated in Oasis montaj,

[00:19:13.450]

our geophysical modeling software,

[00:19:15.790]

and linked to Leapfrog through the geophysical data folder,

[00:19:19.240]

that can access information directly from Central.

[00:19:23.010]

In this case, the resistivity information relates

[00:19:25.910]

to multiple 2D sections within the TSF environment.

[00:19:31.090]

The point information displayed on these sections

[00:19:33.680]

can be actively interpolated into the 3D space,

[00:19:37.190]

through our numeric models folder.

[00:19:39.880]

Just to give you an idea of what that looks like,

[00:19:41.870]

I have prepared a numeric model ahead of time.

[00:19:45.320]

Similarly to what I explained earlier

[00:19:47.510]

regarding the water table surface development,

[00:19:50.540]

an interpolation model takes each individual XYZ point

[00:19:54.920]

into account.

[00:19:56.720]

Again, it then utilizes the Fast Radial Basis Function

[00:20:00.370]

to statistically evaluate the volumetric distribution

[00:20:04.420]

of individual value ranges to create a 3D model

[00:20:08.570]

of the resistivity environment.

[00:20:11.070]

In addition, it allows for the manual adjustment

[00:20:14.430]

of statistical parameters

[00:20:16.430]

to modify the orientation of the established volumes

[00:20:20.080]

according to the observed trend.

[00:20:23.620]

The resulting resistivity model

[00:20:25.670]

shows us that there’s a distinct low resistivity pathway,

[00:20:29.710]

likely relating to a structural feature

[00:20:31.970]

within this environment.

[00:20:34.280]

Should you wish for more control

[00:20:36.150]

on the statistical distribution of, let’s say, contaminants,

[00:20:39.930]

or geochemical variables within the environment,

[00:20:43.170]

you can employ the estimation folder,

[00:20:45.550]

that is outfitted with an experimental variogram,

[00:20:48.500]

and a number of alternate mathematical algorithms

[00:20:51.640]

such as ordinary kriging, and more.
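
For readers unfamiliar with the term, an experimental variogram summarises how much values differ as the distance between sample pairs grows. The sketch below computes the classical semivariance, half the mean squared difference of all pairs falling in each distance bin. It is a textbook calculation under assumed inputs, not the estimation folder's implementation, and the sample values are invented.

```python
import math
from itertools import combinations


def experimental_variogram(samples, lag_width, n_lags):
    """Return (bin centre, semivariance, pair count) for each non-empty distance bin.

    samples is a list of (x, y, value) tuples.
    """
    sums = [0.0] * n_lags
    counts = [0] * n_lags
    for (x1, y1, v1), (x2, y2, v2) in combinations(samples, 2):
        lag_bin = int(math.dist((x1, y1), (x2, y2)) // lag_width)
        if lag_bin < n_lags:
            sums[lag_bin] += (v1 - v2) ** 2
            counts[lag_bin] += 1
    return [
        ((b + 0.5) * lag_width, sums[b] / (2 * counts[b]), counts[b])
        for b in range(n_lags)
        if counts[b] > 0
    ]


# Hypothetical resistivity samples (easting m, northing m, ohm-metres).
samples = [
    (0.0, 0.0, 42.0),
    (30.0, 10.0, 40.5),
    (60.0, 5.0, 35.0),
    (90.0, 20.0, 18.0),
    (120.0, 15.0, 16.5),
]
for centre, gamma, pairs in experimental_variogram(samples, lag_width=40.0, n_lags=4):
    print(f"lag ~{centre:.0f} m: semivariance {gamma:.2f} from {pairs} pairs")
```

A variogram model fitted to these binned values is what kriging methods use to weight nearby samples.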

[00:20:57.980]

Now in this particular example,

[00:20:59.920]

the results of the geophysical analysis

[00:21:02.180]

have not yet been added to the geological interpretation,

[00:21:05.120]

and need to be introduced to show a more realistic model.

[00:21:09.340]

The advantage of Leapfrog is that you can go ahead

[00:21:12.180]

and dynamically evaluate information

[00:21:14.240]

from all sorts of different data sources

[00:21:16.830]

to truly build a coherent holistic model

[00:21:19.370]

within one package.

[00:21:21.690]

Now here’s the complete model of the TSF site,

[00:21:24.830]

including the interpreted fault.

[00:21:27.380]

Once the geological model has been updated,

[00:21:30.180]

that change needs to be actively communicated

[00:21:33.380]

to your colleagues,

[00:21:34.380]

and more specifically, the geotechnical engineers,

[00:21:37.520]

for subsequent stability analysis.

[00:21:40.540]

In order to do so,

[00:21:42.140]

I first need to create a set of sections

[00:21:44.360]

that represent the current geometry.

[00:21:47.200]

Luckily, building sections in Leapfrog is easy.

[00:21:50.510]

Here’s the basic layout of a cross section in Leapfrog.

[00:21:55.020]

And how did we arrive at this?

[00:21:56.750]

It’s fairly straightforward.

[00:21:58.630]

In the cross section folder,

[00:22:00.040]

you have to decide whether you wish to build

[00:22:02.110]

an individual section, a fence section, or a serial section.

[00:22:07.070]

For example, in case of a serial section,

[00:22:10.080]

you will notice here that the set that automatically appears

[00:22:13.500]

hinges itself in the 3D space on an existing section.

[00:22:18.020]

However, you can decide of course,

[00:22:19.690]

on a specific orientation of the particular center section,

[00:22:23.070]

as well as the relative spacing

[00:22:24.820]

regarding the remaining sections of the set.
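
The geometry behind a serial section set can be sketched in a few lines: given a centre section, its orientation, and a spacing, the remaining sections are offset perpendicular to it. The function `serial_section_origins` and its parameters are hypothetical names for this illustration and are not part of Leapfrog.

```python
import math


def serial_section_origins(center, azimuth_deg, spacing, n_each_side):
    """Return the midpoints of parallel section lines, ordered from one side to the other.

    azimuth_deg is the direction of the section line, measured clockwise from north.
    Coordinates are (easting, northing).
    """
    az = math.radians(azimuth_deg)
    # Unit vector perpendicular to the section line.
    normal = (math.cos(az), -math.sin(az))
    cx, cy = center
    return [
        (cx + i * spacing * normal[0], cy + i * spacing * normal[1])
        for i in range(-n_each_side, n_each_side + 1)
    ]


# Hypothetical set: north-south sections every 25 m, two on each side of the centre.
origins = serial_section_origins(
    center=(500.0, 300.0), azimuth_deg=0.0, spacing=25.0, n_each_side=2
)
```

Changing the azimuth rotates the whole set about the centre section, while the spacing only changes how far apart the parallel sections sit.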

[00:22:28.640]

Once the set is established,

[00:22:30.480]

you can then go ahead and define

[00:22:32.380]

just exactly what you wish to present on your section

[00:22:35.470]

through evaluations.

[00:22:37.820]

You can, for example,

[00:22:39.310]

evaluate any model that you’ve previously created,

[00:22:42.840]

which is then dynamically linked.

[00:22:45.110]

This means that any changes to the linked model

[00:22:47.730]

are automatically reflected here,

[00:22:50.110]

without having to go through another evaluation.

[00:22:53.770]

You can also go ahead and portray

[00:22:55.410]

any surface and line in your project

[00:22:57.820]

that matters to your geotechnical analysis.

[00:23:01.020]

In addition, if you build a new section,

[00:23:03.770]

you also have an opportunity to actively copy a layout

[00:23:07.440]

and direct it to another section.

[00:23:10.070]

Thus, you don’t have to reconstruct

[00:23:11.590]

the general distribution of your objects

[00:23:13.520]

every single time.

[00:23:14.830]

It’s done automatically.

[00:23:17.010]

This layout can be exported as a PDF,

[00:23:19.690]

scalable vector graphic,

[00:23:21.340]

or a GeoTIFF.

[00:23:23.325]

Or the entire set or individual section can be exported

[00:23:26.320]

in DXF format, AutoCAD file format,

[00:23:29.220]

and Bentley drawings.

[00:23:34.660]

Now that we’ve reached a milestone in our interpretation,

[00:23:38.380]

it is time to communicate the change to our team

[00:23:41.220]

and publish the updated model into Central.

[00:23:44.920]

That way the model can be accessed

[00:23:46.700]

by all stakeholders online,

[00:23:49.010]

reviewed in almost real-time,

[00:23:50.750]

and stored for audit purposes if required.

[00:23:54.450]

The publication process itself can actively take place

[00:23:57.680]

in the Leapfrog modeling suite.

[00:24:00.060]

All you need to do is to choose the modeling objects

[00:24:02.750]

you wish to visualize in the web portal, add the project,

[00:24:07.050]

and define a customizable project stage

[00:24:09.720]

to make it easy for your colleagues to understand

[00:24:12.100]

whether the current publication is of experimental nature

[00:24:15.540]

or needs to be approved through a peer-review process.

[00:24:19.860]

In addition,

[00:24:20.693]

you can then define a project branch

[00:24:22.610]

in our continued version tree.

[00:24:25.170]

The concept here is to differentiate

[00:24:27.060]

individual Leapfrog models

[00:24:28.530]

by content, site location, and modeling approach.

[00:24:33.080]

I will speak about the concept

[00:24:34.570]

of branching and its advantages

[00:24:36.600]

in more detail in the third part of our webinar series.

[00:24:41.020]

Once uploaded, all stakeholders associated with the project

[00:24:44.950]

are notified of the changes

[00:24:47.050]

through our online notification system in the web portal,

[00:24:50.246]

or via an email.

[00:24:52.230]

It is your choice to decide

[00:24:53.660]

how you wish to be alerted to the change.

[00:24:56.490]

The key is that you will hear

[00:24:57.740]

about the modifications right away,

[00:25:00.270]

to maximize the real-time integrated workflow approach.

[00:25:05.921]

Okay.

[00:25:07.220]

Let’s navigate back to the Central portal

[00:25:09.900]

where we can visualize the model we just uploaded.

[00:25:13.090]

Here on the history page of the Central project,

[00:25:15.780]

we can see the version tree and the latest upload.

[00:25:19.850]

Each individual version upload

[00:25:21.720]

is outfitted with a set of metadata,

[00:25:24.320]

including a succinct comment,

[00:25:26.460]

that explains just exactly what has changed.

[00:25:30.380]

This consistent record makes it easy to navigate

[00:25:33.350]

the evolution of the project,

[00:25:35.060]

even a year or two down the road,

[00:25:36.840]

and to understand just exactly

[00:25:38.760]

why certain decisions were made in the first place.

[00:25:42.330]

To review more specific detail,

[00:25:44.490]

we can click on a particular version,

[00:25:46.830]

and navigate to the right.

[00:25:48.580]

Here, I’d like to highlight the comment panel,

[00:25:51.630]

where the collective conversation and intellectual exchange

[00:25:55.680]

around this version upload is preserved.

[00:25:58.410]

The beauty here is that each comment

[00:26:00.490]

is outfitted with a thumbnail image

[00:26:02.840]

that, when clicked, allows the reader to be placed

[00:26:06.080]

quite figuratively into the midst of the conversation.

[00:26:12.950]

And indeed, by clicking on the image in the comment,

[00:26:15.820]

we now find ourselves

[00:26:17.240]

in the web visualization service of Central,

[00:26:20.560]

and in the middle of the 3D model.

[00:26:23.950]

The geotag placed identifies the exact XYZ location

[00:26:28.670]

which requires discussion.

[00:26:30.440]

And makes it easy for all the stakeholders,

[00:26:32.830]

even the non-Leapfrog users,

[00:26:34.960]

to quickly visualize the issue at hand

[00:26:37.310]

and define the next steps together.

[00:26:40.270]

Placing a comment is easy, and once done,

[00:26:43.010]

all stakeholders noted with at-mentions,

[00:26:46.050]

or who have subscribed to Central’s project notifications

[00:26:50.490]

can start interacting in real-time.

[00:26:53.750]

Being able to actively partake in a conversation,

[00:26:57.050]

provide comments, and simultaneously review

[00:27:00.250]

geological objects and more within the 3D environment,

[00:27:04.100]

allows for a true multidimensional peer-review process,

[00:27:08.250]

that adds to the security of your organization

[00:27:10.750]

by building a consistent audit trail.

[00:27:13.690]

In this case, the comment left here

[00:27:15.830]

is designed to inform the geotechnical engineers in my team

[00:27:19.620]

that the geological model has been altered,

[00:27:21.900]

and that a new set of sections is available

[00:27:24.110]

for subsequent stability analysis in GeoStudio.

[00:27:28.270]

Now they can access the 3D model in the web portal,

[00:27:31.090]

and investigate the newly altered geology,

[00:27:34.080]

yet again, without the need for a Leapfrog license,

[00:27:36.950]

and actively reply or create a new comment there.

[00:27:43.990]

To access the sections of interest,

[00:27:46.790]

the geotechnical team can now navigate

[00:27:48.950]

to the data room of the project,

[00:27:50.720]

and choose the exact file format required

[00:27:53.300]

for subsequent analysis.

[00:27:55.470]

Once finalized,

[00:27:56.690]

they can then return and communicate

[00:27:58.810]

the modified stability analysis

[00:28:00.710]

to the remaining team through Central.

[00:28:03.690]

While this process is vastly accelerated

[00:28:06.130]

through the improved real-time communication,

[00:28:09.050]

the download of the files remains classic at this moment.

[00:28:12.860]

Having said that, you, our global customers,

[00:28:15.880]

have asked for more seamless integration.

[00:28:18.370]

And we have listened.

[00:28:21.410]

Both Seequent’s July and November releases

[00:28:24.500]

are all under the mantle of 2D

[00:28:26.650]

and 3D integration with Central.

[00:28:29.430]

The next part of our webinar series,

[00:28:31.340]

that focuses on the continued development

[00:28:34.000]

of the digital twin within GeoStudio,

[00:28:36.980]

will demonstrate what functionality

[00:28:39.160]

is on the immediate roadmap

[00:28:40.790]

to create a dynamic link between Central and GeoStudio.

[00:28:45.480]

So in summary, when we assess what it takes

[00:28:49.010]

to manage tailings storage facilities safely,

[00:28:52.200]

and consider the requirements

[00:28:54.170]

of the Global Tailings Standard,

[00:28:56.410]

teams have to think about the holistic modeling approach.

[00:28:59.440]

That is, the digital twin.

[00:29:02.370]

The digital twin becomes the basis for designs,

[00:29:05.180]

used at all phases of the project’s life cycle.

[00:29:08.630]

It invites the engineers to participate

[00:29:11.050]

in the investigation of the physical system,

[00:29:13.720]

to understand the geological constraints,

[00:29:16.400]

and make informed decisions

[00:29:18.570]

about the facility’s performance as it evolves.

[00:29:22.390]

A comprehensive digital twin

[00:29:24.170]

that consistently incorporates changing data,

[00:29:26.970]

and evaluates all spatial, numeric,

[00:29:29.790]

and intellectual information

[00:29:31.860]

in a 3D plus temporal context,

[00:29:34.680]

helps to identify problems early.

[00:29:38.320]

It can also help design targeted monitoring programs.

[00:29:42.520]

Interpreting monitoring data is a significant challenge,

[00:29:45.980]

as it goes beyond plotting a time series

[00:29:48.430]

and trigger thresholds.

[00:29:50.350]

Again, data is only valuable

[00:29:52.700]

if it is interpreted in the context of the digital twin.

[00:29:57.060]

A continuously updated digital twin

[00:29:59.760]

enables an adaptable design

[00:30:02.040]

that allows changes to be identified in the moment,

[00:30:05.250]

to accept the current construction trajectory

[00:30:08.090]

that meets the factors of safety.

[00:30:12.770]

The benefits associated with the proposed

[00:30:15.080]

integrated workflow are as follows:

[00:30:18.040]

The combined use of the products

[00:30:20.040]

allows the team of geoscientists

[00:30:21.500]

to truly collaborate, break down barriers,

[00:30:23.920]

and make confident decisions together.

[00:30:26.770]

Particularly by being able to notify

[00:30:28.890]

all stakeholders in real-time,

[00:30:31.080]

and by providing direct access

[00:30:32.790]

to each other’s data when it’s needed,

[00:30:34.940]

the team can arrive at important decisions faster.

[00:30:38.270]

The proposed workflow also enhances the team’s efficiency

[00:30:41.460]

through the ability to track, understand,

[00:30:43.760]

and link peer-reviewed modeling changes.

[00:30:47.470]

The removal of redundancies

[00:30:49.160]

through a more standardized process

[00:30:51.400]

using intuitive technologies and practices

[00:30:54.680]

allows for greater team productivity.

[00:30:57.520]

Coherent data flow,

[00:30:58.710]

and transparency of the decision-making process,

[00:31:01.600]

provide the team with an opportunity to learn

[00:31:03.860]

what has changed, why it has changed over time,

[00:31:07.190]

and how to fix it.

[00:31:08.227]

In the short term, that’s reducing risk preemptively.

[00:31:12.370]

And lastly, being able to create

[00:31:14.540]

a coherent audit trail for internal and public review

[00:31:18.080]

provides security to the operator

[00:31:20.400]

and organization as a whole.

[00:31:24.500]

We at Seequent truly believe a paradigm shift is required,

[00:31:28.710]

whereby tailings governance needs to shift

[00:31:30.800]

from dominantly reactive, long-term modeling,

[00:31:34.540]

to a more strongly agile, even predictive,

[00:31:37.570]

short-term modeling approach.

[00:31:40.370]

Helping to prevent failure isn’t about one piece of data,

[00:31:43.470]

or a single piece of technology,

[00:31:45.490]

but it’s how you bring all of the pieces together

[00:31:48.030]

that counts.

[00:31:49.720]

Thank you for your time and attention.

[00:31:51.770]

We look forward to welcoming you again in mid-June,

[00:31:54.410]

to the second part of our webinar series,

[00:31:56.767]

“From a 3D Leapfrog Model to a Comprehensive

[00:31:59.750]

Geotechnical Analysis in GeoStudio.”

[00:32:03.010]

In the meantime, please don’t hesitate to contact us

[00:32:05.530]

should you have any questions.

[00:32:06.800]

And if you have a few minutes to spare,

[00:32:09.290]

we would greatly appreciate your participation

[00:32:11.990]

in a short survey after this webinar.

[00:32:15.260]

Thanks again, and have a great day.