ICMM Vendor Engagement for Tailings Storage Facilities Monitoring.

The industry has already come a long way to meet the Global Tailings Standard, and the ICMM in particular has greatly contributed to this development. Yet, as we all know, technological evolution never stops and is in fact speeding up. And so, while monitoring will continue to be a cornerstone of our understanding of tailings storage facilities (TSFs), there are elements of the overall analytical workflow that can be optimised to let the data speak more loudly and facilitate an agile decision-making process.

In this presentation, we take a look at dynamic digital twin technology, review what a digital twin represents from a Seequent and Sensemetrics perspective, and show how a transparent, real-time flow of information can support an iterative workflow.

Overview

Speakers

Iain McLean

Executive Vice President, North America – Seequent

Janina Elliot

Global Central Technical Lead – Seequent

Alex Pienaar

Director of Mining – Sensemetrics

Duration

15 min


Video Transcript

[00:00:00.210]

<v Iain>The unique issues surrounding tailings dams</v>

[00:00:02.530]

require a combination of solutions

[00:00:04.800]

all of which are proven components.

[00:00:07.130]

We have a 20-plus-year history in the industry

[00:00:09.480]

and have been an integral part

[00:00:10.530]

of decision-making throughout.

[00:00:12.940]

Now, we wanted to share a view with the ICMM

[00:00:16.290]

that our proactive approach to TSF management

[00:00:19.200]

can be achieved by bringing together

[00:00:21.380]

trusted monitoring technology

[00:00:23.900]

with geoscience modeling tools

[00:00:26.150]

to generate a dynamic digital twin,

[00:00:28.940]

to enable better planning and decision-making.

[00:00:32.449]

Now, in the original request for proposal,

[00:00:36.150]

the intentions of the committee were

[00:00:38.580]

that they wanted to evaluate the current state of the art

[00:00:41.470]

in monitoring systems, including dashboards.

[00:00:44.550]

And we recognize that they provide a vital link

[00:00:46.870]

in the management of facilities,

[00:00:49.010]

but we wanted to highlight that they’re part of the solution

[00:00:52.520]

and we aim to propose to them

[00:00:54.291]

and to you here, that there is a more holistic view

[00:00:57.460]

to be had that can be a crucial tool

[00:01:00.170]

in securing the safety and social contract

[00:01:03.350]

of these tailings sites.

[00:01:06.760]

Janina, if you could please go to the next slide.

[00:01:10.994]

the committee to respect the intentions

[00:01:13.313]

that are expressed in this statement.

[00:01:15.680]

And so now I’m going to introduce Janina,

[00:01:18.020]

who is going to run the agenda and set the context

[00:01:21.000]

for our approach.

[00:01:22.500]

Janina.

[00:01:23.360]

<v Janina>Thanks Iain.</v>

[00:01:25.300]

Okay, so let’s have a look at the agenda

[00:01:27.000]

for today’s presentation.

[00:01:28.470]

We will start by providing a brief overview

[00:01:31.010]

of your trusted partners in the industry

[00:01:33.550]

and the established technologies

[00:01:35.370]

that you rely upon on a daily basis.

[00:01:37.656]

Then we will establish what it is that we wish

[00:01:40.610]

to achieve as a community of partners,

[00:01:43.260]

particularly considering the goals

[00:01:45.410]

of the Global Tailings Standard.

[00:01:47.400]

Now the industry has already come a long way

[00:01:50.080]

to match the standards

[00:01:51.280]

and the ICMM in particular has greatly contributed

[00:01:55.260]

to this development.

[00:01:56.093]

Yet, as we all know, technological evolution never stops

[00:02:00.000]

and indeed is speeding up.

[00:02:02.010]

And so while monitoring will continue

[00:02:04.540]

to represent a cornerstone in the understanding of TSFs,

[00:02:08.960]

there are elements of the overall analytical workflow

[00:02:12.490]

that can be optimized to let the data speak more loudly

[00:02:16.360]

and facilitate an agile decision-making process.

[00:02:20.570]

As such, we will have a look

[00:02:21.790]

at dynamic digital twin technology

[00:02:24.530]

and we’ll review what a digital twin represents

[00:02:27.410]

from a Seequent and Sensemetrics perspective,

[00:02:30.260]

and how a transparent and real-time flow

[00:02:32.610]

of information can assist an iterative workflow approach.

[00:02:36.620]

Now, many of you will be familiar with Seequent

[00:02:38.510]

as we have been an established partner

[00:02:40.960]

of the global mining houses for many decades,

[00:02:44.000]

but what may be new to you is that Seequent

[00:02:45.403]

as well as Sensemetrics

[00:02:47.300]

have recently joined forces

[00:02:48.589]

with Bentley, a company that aspires

[00:02:51.650]

to bring the digital above-surface

[00:02:53.540]

and below-surface worlds together

[00:02:56.350]

to create true project insight

[00:02:58.180]

through digital twin technology.

[00:03:00.900]

As such, we’ve become one family with Sensemetrics

[00:03:04.010]

and now have a unique opportunity

[00:03:06.360]

to leverage each other’s expertise

[00:03:08.330]

and vast industry experience to take a leap

[00:03:11.190]

into the future of TSF management.

[00:03:13.880]

Now, what is it that drives the industry today?

[00:03:16.900]

I think all of us know what the answer to this question is,

[00:03:19.500]

and I won’t elaborate on past challenges

[00:03:22.140]

and events we are all familiar with.

[00:03:24.850]

Instead I’d like to highlight

[00:03:26.310]

how far the industry has come since,

[00:03:28.820]

and the direction it has taken to meet the new standard.

[00:03:33.010]

Arguably, the biggest commitment

[00:03:34.890]

is to a digital transformation

[00:03:37.320]

and the creation of a comprehensive

[00:03:39.440]

and accessible knowledge base

[00:03:41.273]

that provides complete transparency regarding the management

[00:03:44.880]

of TSF and the chain of events in case of failure.

[00:03:48.930]

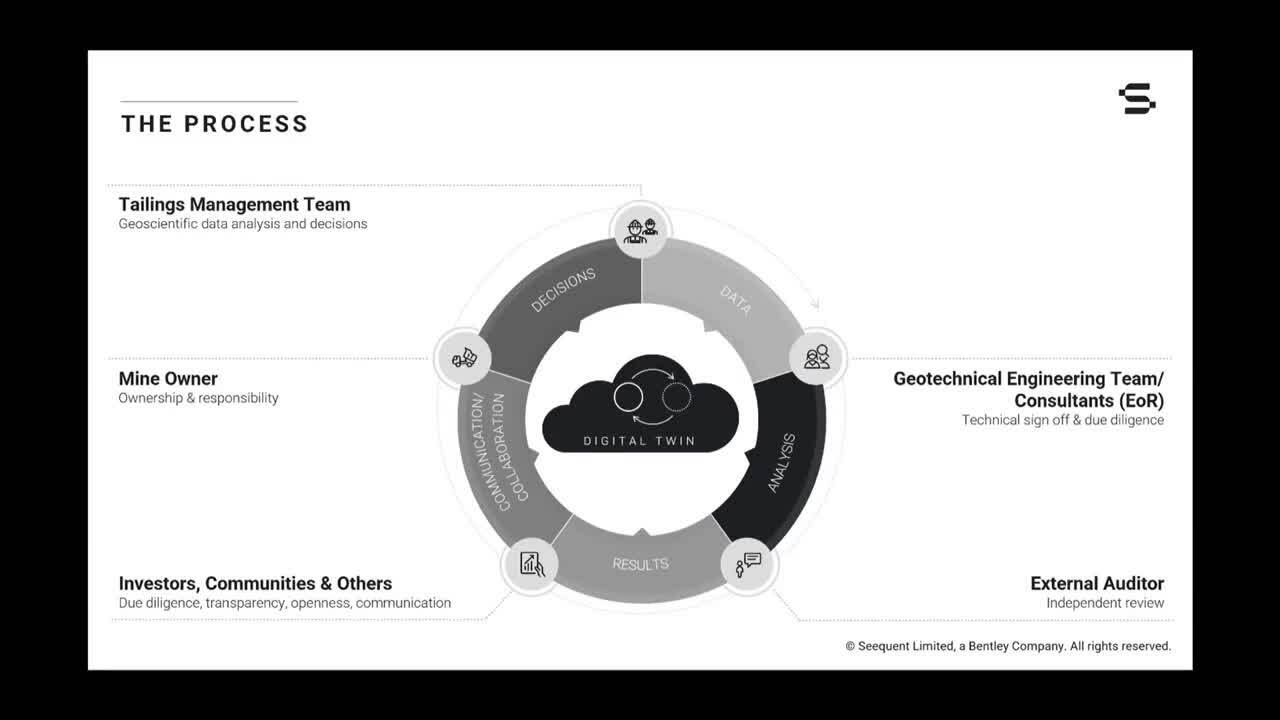

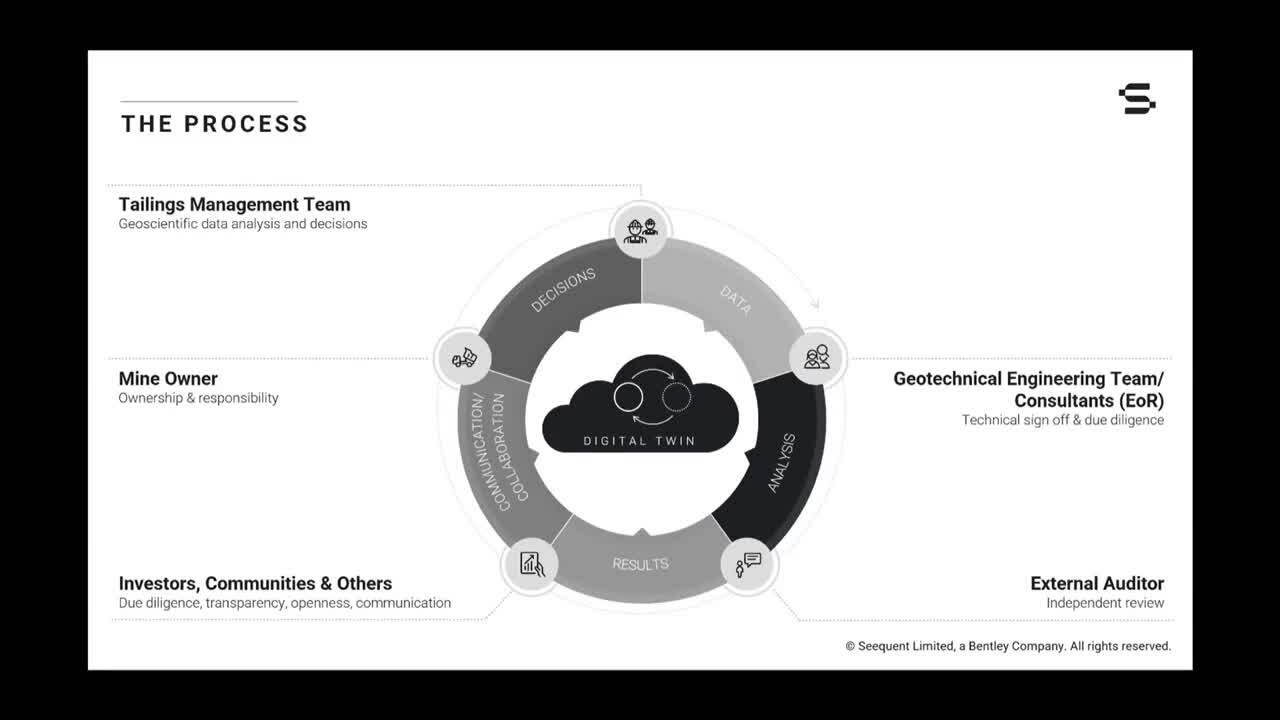

Now here’s the ideal case scenario

[00:03:51.350]

of an integrated workflow approach

[00:03:53.680]

whereby the accumulated and real-time knowledge,

[00:03:57.240]

3D monitoring and modeling,

[00:03:59.280]

and ultimately a digital twin drive TSF governance.

[00:04:04.390]

The idea is that a dynamic digital twin provides the basis

[00:04:07.880]

for a robust decision-making process to maintain

[00:04:11.160]

or adjust the site strategy regarding technical execution,

[00:04:15.810]

data acquisition, learning, emergency response,

[00:04:19.290]

as well as reporting and public outreach.

[00:04:21.740]

However, that is easier said than done.

[00:04:26.453]

And so we have some challenges.

[00:04:28.430]

The analysis, design and operation of a TSF are challenging,

[00:04:33.290]

and there’s no doubt about that.

[00:04:35.130]

Both the design and our understanding

[00:04:37.370]

of the site are constantly evolving.

[00:04:39.753]

And the engineer of record is responsible

[00:04:42.350]

for detecting changes in the current

[00:04:44.500]

and future performance of the facility

[00:04:46.523]

through all phases of the life cycle.

[00:04:49.748]

Therefore, targeted monitoring is essential,

[00:04:53.010]

but interpreting the data is challenging as well

[00:04:56.220]

and must be done in the context of the physical system.

[00:05:00.700]

What we would like to do today is to take you on a journey

[00:05:03.760]

and show you what modern tailings

[00:05:05.410]

management could look like.

[00:05:07.600]

Rigorous monitoring through sensor data is

[00:05:09.910]

and remains a cornerstone in how we assess TSFs.

[00:05:13.520]

It allows us to understand how the facilities behave today.

[00:05:17.090]

The element we wish to add to the current

[00:05:19.290]

and established process is prediction.

[00:05:22.372]

That is the ability to detect a system change

[00:05:25.510]

through monitoring, correlate

[00:05:27.630]

and assess the information in a 3D context

[00:05:30.080]

with all other system information, i.e. the digital twin,

[00:05:33.950]

and react in a preventative manner

[00:05:36.720]

to create safer TSF sites.

[00:05:40.100]

Of course, to allow for this process to unfold

[00:05:43.300]

and support an iterative continuation,

[00:05:46.830]

the digital twin needs to be flexible

[00:05:48.830]

and dynamic enough to incorporate change

[00:05:51.060]

at any stage of the life cycle.

[00:05:53.420]

The key ingredient here is not only integrated technology

[00:05:56.810]

and transparent data flow,

[00:05:58.800]

but also that all entities involved, from management

[00:06:02.110]

to technical engineers, consultants, and internal

[00:06:04.700]

and external reviewers, are interconnected in real time.

[00:06:09.010]

All parties need to be able to network and collaborate

[00:06:11.880]

to support the perpetuation of the digital twin,

[00:06:14.900]

utilizing the latest data

[00:06:16.470]

and each other’s knowledge and expertise.

[00:06:19.550]

And now I hand the conversation to my colleague, Alex,

[00:06:22.150]

who will take us through the technical execution

[00:06:24.780]

of the dynamic digital twin workflow

[00:06:26.940]

as imagined by Seequent and Sensemetrics.

[00:06:30.100]

Alex.

[00:06:31.490]

<v Alex>Thank you very much.</v>

[00:06:33.060]

So the evergreen digital twin paradigm

[00:06:36.030]

provides a new set of tools

[00:06:38.880]

to meet new standards,

[00:06:40.559]

giving engineers a technological leap

[00:06:44.450]

through the looking glass,

[00:06:45.800]

into the inner mechanics of critical assets,

[00:06:48.502]

such as tailings storage facilities.

[00:06:51.520]

It represents the next evolution of static 3D models,

[00:06:56.400]

dashboards and controlled variables.

[00:06:59.010]

In the context of TSF,

[00:07:01.310]

this allows engineers to visualize

[00:07:03.230]

their assets, track changes, simulate events

[00:07:06.250]

and perform analysis to dynamically recalibrate.

[00:07:09.550]

In so doing, it enables learning, reasoning

[00:07:12.280]

and an overall better understanding of the asset itself.

[00:07:17.570]

What does it mean to sensorize a TSF in practice?

[00:07:21.460]

Does that mean increasing the sensor count by five times,

[00:07:24.360]

maybe 10 times?

[00:07:25.881]

What about surface and subsurface deformation?

[00:07:29.910]

The increased density and diversity of sensor types make

[00:07:33.810]

for an extremely complex landscape of sensing, connectivity,

[00:07:38.070]

device control, network and data management

[00:07:41.260]

before measurements can even be converted

[00:07:43.230]

into usable information.

[00:07:45.196]

IoT removes the multiple friction points

[00:07:47.970]

inherent in bringing all of this together.

[00:07:50.260]

It essentially increases efficiency and productivity

[00:07:54.000]

while at the same time

[00:07:56.080]

enabling scalability and flexibility,

[00:07:58.530]

not just across a single site, but across multiple sites.

[00:08:01.658]

IoT ultimately becomes the foundational piece

[00:08:05.330]

of the data science pyramid.

[00:08:07.950]

I myself am far from having any competence

[00:08:11.320]

in this domain,

[00:08:12.410]

but I remember in high school being presented

[00:08:14.270]

with Maslow’s hierarchy of needs.

[00:08:17.180]

The best I can describe it today is as the different stages

[00:08:20.330]

that humans have to go through to find happiness.

[00:08:23.250]

The key thing to take from this, though,

[00:08:24.930]

is that you have to build on the underlying layer

[00:08:28.960]

in a cumulative way.

[00:08:30.360]

Needs lower down the hierarchy must first be satisfied

[00:08:33.660]

before individuals can attend to needs higher up.

[00:08:37.210]

The same is true for the data science pyramid.

[00:08:39.220]

You cannot get

[00:08:40.080]

to the artificially intelligent, blockchain-powered,

[00:08:44.640]

extended reality, insert-buzzword-here stage

[00:08:49.110]

before accomplishing the underlying stages.

[00:08:52.170]

If you progress to the next stage too fast,

[00:08:54.610]

it’s not a solid pyramid.

[00:08:55.810]

And moreover, to properly accomplish each of the stages,

[00:08:59.530]

you must use the output from the previous stage

[00:09:02.060]

as the input to the next step.

[00:09:04.370]

IoT has this unique capability

[00:09:06.660]

to build an extremely solid foundation on top of a broad

[00:09:09.524]

and diverse sensor base.

[00:09:11.670]

This is achieved through edge computing devices

[00:09:14.090]

that deliver a self-provisioning, sensor-agnostic interface,

[00:09:17.960]

through cloud computing

[00:09:20.080]

that enables a comprehensive system

[00:09:21.730]

of record powering an infinitely scalable

[00:09:25.478]

computational engine, and with all of this accessible

[00:09:28.730]

to the subsequent layer through an easy-to-use

[00:09:30.830]

application programming interface, or API.
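
The sensor-agnostic layer described here means that heterogeneous devices are normalized into one consistent record before the next layer of the pyramid consumes them. The Python sketch below illustrates only that normalization idea. The payload keys, the `Reading` record and both adapter functions are hypothetical; they are not the Sensemetrics API or any real device format.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict


@dataclass(frozen=True)
class Reading:
    """Hypothetical common record emitted by a sensor-agnostic layer."""
    sensor_id: str
    quantity: str        # e.g. "pore_pressure_kpa"
    value: float
    timestamp: datetime


# Hypothetical vendor payload shapes; real devices will differ.
def from_vibrating_wire(payload: dict) -> Reading:
    # Assumed: pressure already in kPa under "p_kpa", epoch seconds under "t".
    return Reading(payload["id"], "pore_pressure_kpa", float(payload["p_kpa"]),
                   datetime.fromtimestamp(payload["t"], tz=timezone.utc))


def from_legacy_logger(payload: dict) -> Reading:
    # Assumed: pressure in psi and an ISO 8601 time string; convert psi to kPa.
    return Reading(payload["tag"], "pore_pressure_kpa", float(payload["psi"]) * 6.894757,
                   datetime.fromisoformat(payload["time"]))


ADAPTERS: Dict[str, Callable[[dict], Reading]] = {
    "vw": from_vibrating_wire,
    "legacy": from_legacy_logger,
}


def normalize(kind: str, payload: dict) -> Reading:
    """Route a raw payload to its adapter so downstream layers see one format."""
    try:
        adapter = ADAPTERS[kind]
    except KeyError:
        raise ValueError(f"no adapter registered for sensor kind {kind!r}")
    return adapter(payload)
```

For example, `normalize("legacy", {"tag": "PZ-02", "psi": 10, "time": "2021-01-01T00:00:00+00:00"})` yields a `Reading` in kPa, so a downstream model never needs to know which vendor produced it. Adding a new sensor type then only means registering one more adapter.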

[00:09:35.748]

And as these layers build on top of each other,

[00:09:38.670]

we evolve beyond data acquisition,

[00:09:41.414]

beyond understanding how facilities behave

[00:09:44.410]

through a separate dashboard or static model to a state

[00:09:47.350]

where we are controlling how our facilities behave

[00:09:49.158]

in the future through evergreen digital twins,

[00:09:53.210]

where we are equipping engineers

[00:09:54.750]

with that technological leap through the looking glass,

[00:09:57.720]

into the inner mechanics of these facilities,

[00:10:00.270]

enabling learning and reasoning on a whole new level,

[00:10:03.315]

and where we facilitate collaboration through easy access

[00:10:07.590]

to contextually understood data derived

[00:10:10.060]

from the same source of truth,

[00:10:11.690]

to make decisions as a network of stakeholders

[00:10:14.250]

or as a team.

[00:10:17.590]

An integrated workflow,

[00:10:19.610]

therefore, instantly connects the site situation

[00:10:22.390]

with the analytical,

[00:10:24.030]

in essence allowing all stakeholders or team members

[00:10:27.500]

to understand how facilities behave.

[00:10:30.040]

More importantly,

[00:10:31.150]

this provides them with two mechanisms

[00:10:33.050]

to control how facilities behave in the future.

[00:10:36.890]

First, digital twins have the ability

[00:10:39.870]

to improve their predictive performance over time,

[00:10:42.500]

that is, improvement of the digital twin itself.

[00:10:44.930]

This can only be achieved

[00:10:45.920]

when real-time sensor data is tightly integrated

[00:10:48.370]

with tools such as 3D seepage models,

[00:10:50.730]

slope stability analysis,

[00:10:52.130]

and stress and strain calculations.

[00:10:54.710]

The second mechanism is through the ability

[00:10:56.860]

to inform the user when predicted performance will be poor.

[00:11:00.710]

This means identifying regions for improvement

[00:11:03.470]

and offering a course of action

[00:11:04.900]

for improving its predictive capabilities

[00:11:06.937]

through updated engineering designs,

[00:11:09.310]

3D geological models and/or 3D water table models.
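
One simple way to picture this second mechanism, flagging where predicted performance is poor, is to compare model predictions with sensor observations zone by zone. The sketch below assumes predictions and observations have already been paired per sensor and grouped into named zones; the zone names, the root-mean-square error (RMSE) metric and the tolerance are illustrative choices, not something the presenters specified.

```python
from collections import defaultdict
from math import sqrt
from typing import Dict, List, Tuple


def flag_poor_prediction_zones(
    records: List[Tuple[str, float, float]],
    tolerance: float,
) -> Dict[str, float]:
    """Return {zone: rmse} for zones whose RMSE exceeds `tolerance`.

    Each record is (zone, predicted, observed), e.g. piezometric head in metres.
    Flagged zones are candidates for recalibration, redesign or extra monitoring.
    """
    residuals: Dict[str, List[float]] = defaultdict(list)
    for zone, predicted, observed in records:
        residuals[zone].append(observed - predicted)

    flagged: Dict[str, float] = {}
    for zone, errors in residuals.items():
        rmse = sqrt(sum(e * e for e in errors) / len(errors))
        if rmse > tolerance:
            flagged[zone] = rmse
    return flagged
```

With a 0.5 m tolerance, a zone whose observed heads sit consistently 1.5 m above prediction would be flagged, while one with residuals of a few centimetres would not.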

[00:11:14.200]

And with that, I’m handing it back to my colleague, Janina.

[00:11:19.440]

<v Janina>Thanks Alex.</v>

[00:11:20.680]

Okay, now let’s have a look

[00:11:21.699]

at how Seequent modeling products pick up the journey

[00:11:24.918]

after data acquisition

[00:11:26.960]

and make a dynamic digital twin come to life.

[00:11:31.020]

And here we see a dynamic 3D model of the local geology.

[00:11:34.200]

The surfaces are implicitly generated in Leapfrog.

[00:11:37.270]

The resulting geological model can be used

[00:11:39.530]

as a foundation for a hydrogeological grid model.

[00:11:42.640]

In addition, we can introduce geophysical data

[00:11:44.740]

or other numeric point information such as sensor data

[00:11:48.100]

to build a full 3D interpolation model.
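
The talk does not describe how sensor point data is interpolated in Leapfrog. As a generic stand-in, inverse distance weighting (IDW) shows how scattered point readings, for example piezometer values, can be turned into an estimate at any 3D location. It is a deliberately simple substitute and not necessarily the method Leapfrog uses.

```python
from math import dist
from typing import Sequence, Tuple

Point = Tuple[float, float, float]  # (x, y, z), e.g. easting, northing, elevation


def idw(samples: Sequence[Tuple[Point, float]], target: Point, power: float = 2.0) -> float:
    """Estimate the value at `target` by inverse distance weighting.

    `samples` holds (location, value) pairs, e.g. sensor positions and readings.
    Closer samples get larger weights; `power` controls how fast influence decays.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    weighted_sum = 0.0
    weight_total = 0.0
    for location, value in samples:
        d = dist(location, target)
        if d == 0.0:
            return value  # target sits exactly on a sensor: use its reading
        w = 1.0 / d ** power
        weighted_sum += w * value
        weight_total += w
    return weighted_sum / weight_total
```

Evaluating `idw` at every node of a 3D grid gives a crude volumetric model; production tools typically use more sophisticated interpolants that also honour geological boundaries.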

[00:11:50.810]

This information can be correlated to build a site model

[00:11:54.003]

that can then be actively shared in 3D

[00:11:56.860]

or via a 2D cross-section as shown here.

[00:11:59.690]

And here you have Seequent Central.

[00:12:01.690]

The key aspect for collaboration is the maintenance

[00:12:04.090]

of a model and communication history.

[00:12:06.030]

Central, our cloud-based data management system,

[00:12:08.883]

is an active system

[00:12:12.110]

where all active stakeholders

[00:12:13.090]

can access a version-controlled knowledge base.

[00:12:16.090]

Not only can they review and visualize the models live

[00:12:18.370]

on the web,

[00:12:19.203]

but they can actively exchange comments

[00:12:20.450]

and notify team members in real time.

[00:12:23.040]

It is also outfitted with a version-controlled data store

[00:12:25.640]

that allows for the easy exchange and dynamic linking

[00:12:28.040]

of essential data files for subsequent analysis.

[00:12:31.160]

And here we have GeoStudio,

[00:12:32.900]

where the initial 2D cross-section from Leapfrog is used

[00:12:35.125]

for geotechnical analysis of the foundation response

[00:12:38.490]

to construction loading,

[00:12:40.160]

ending with a graph

[00:12:41.240]

of the key performance indicator for construction.

[00:12:44.208]

The results of this analysis

[00:12:45.920]

can then re-enter the iterative workflow,

[00:12:48.150]

actively informing essential target ranges

[00:12:50.580]

for sensor data and monitoring.
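
Feeding analysis results back as target ranges can be pictured as a simple check of live readings against per-sensor bands. The sketch below is illustrative only: the `TargetRange` type, the sensor IDs and the band values are hypothetical, and the talk does not say how target ranges are represented or exchanged between tools.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TargetRange:
    """Hypothetical acceptable band for one sensor, e.g. derived from an analysis run."""
    low: float
    high: float


def out_of_range(readings: Dict[str, float], targets: Dict[str, TargetRange]) -> List[str]:
    """Return IDs (sorted) of sensors whose latest reading falls outside its target range.

    Sensors without a defined target range are skipped rather than flagged.
    """
    alerts = []
    for sensor_id, value in sorted(readings.items()):
        band = targets.get(sensor_id)
        if band is not None and not (band.low <= value <= band.high):
            alerts.append(sensor_id)
    return alerts
```

Skipping sensors without a band is a design choice; a stricter workflow might instead flag them so that every instrument is covered by an analysis-derived target.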

[00:12:52.950]

And this is the proposed workflow.

[00:12:54.880]

And now I’d like to hand the conversation back to Iain

[00:12:57.150]

to conclude our presentation.

[00:12:58.810]

<v Iain>Thank you.</v>

[00:13:00.270]

So indeed, thanks, Janina.

[00:13:02.640]

So in summary,

[00:13:03.810]

when we assess what it takes to take the next step

[00:13:06.840]

in managing modern tailings storage facilities,

[00:13:08.650]

monitoring and management teams have

[00:13:11.340]

to consider the digital transformation of the process.

[00:13:15.160]

Monitoring data provides one essential cornerstone

[00:13:18.430]

for the development of a transparent

[00:13:20.540]

and dynamic digital twin,

[00:13:22.450]

which in turn becomes the basis for designs used

[00:13:25.970]

at all phases of the project’s life cycle.

[00:13:29.210]

Now this holistic approach invites the engineers

[00:13:31.830]

to participate in the investigation of the physical system

[00:13:34.990]

to understand the geological constraints

[00:13:37.400]

and make informed decisions

[00:13:39.070]

about the facility’s performance as it evolves.

[00:13:42.122]

A comprehensive digital twin

[00:13:44.667]

that consistently incorporates changing data

[00:13:48.030]

and evaluates all spatial, numeric

[00:13:50.725]

and intellectual information

[00:13:52.410]

in a three-dimensional plus temporal context helps

[00:13:56.480]

to identify problems early.

[00:13:59.050]

It can also help develop

[00:14:00.280]

and adjust the targeted monitoring programs

[00:14:03.090]

and enable the operator to create an adaptable design

[00:14:06.560]

that allows changes to be identified in the moment

[00:14:09.510]

to adapt the current construction trajectory

[00:14:12.120]

so that it meets the required factors of safety.

[00:14:15.160]

So the essential takeaway from our presentation

[00:14:18.640]

is that we want to convey a conviction

[00:14:20.830]

that there is a paradigm shift occurring.

[00:14:23.730]

Tailings governance is now able to shift

[00:14:26.390]

from a predominantly reactive, long-term modeling approach

[00:14:30.681]

to a more agile, predictive,

[00:14:33.870]

short-term modeling method.

[00:14:36.123]

Preventing failure is not about a single data point

[00:14:39.180]

or technology,

[00:14:40.390]

rather it’s how you bring

[00:14:41.650]

the whole complex information set together that counts.

[00:14:45.460]

Thank you very much for your time

[00:14:46.980]

and of course we welcome further questions.