A step-by-step webinar to go through analysing EM data in Oasis montaj and 1D and 2.5D TDEM VOXI modelling of conductive anomalies.

Recent improvements in geophysical algorithms can pull more information out of new and historical electromagnetic datasets. Oasis montaj is a geoscience platform for processing, modelling, and sharing geophysical data with other stakeholders. In Oasis montaj 9.10, improvements to the VOXI TDEM workflow allow for ground and airborne electromagnetic surveys to be inverted into conductivity or resistivity models of the earth. This workflow shows how to use the EM Utilities extension to set up the data for modelling, calculate and remove EM array noise, calculate the Tau values and analyse EM arrays. The data is then brought into VOXI for geophysical modelling using the benefits of the simple VOXI workflow for setting up data space parameters. This data is shared with the geological team through new upload and notification tools in Seequent Central.

Overview

Speakers

Mark Lowe

Project Geophysicist – Seequent

Duration

39 min

Video Transcript

[00:00:00.850]

<v Mark>Hello, everyone.</v>

[00:00:01.852]

Welcome to the webinar today.

[00:00:04.070]

Let’s take a moment to allow everyone to log on

[00:00:05.037]

and get connected.

[00:00:44.974]

Welcome, everyone, to the webinar today.

[00:00:47.650]

So, today we’re going to be using Oasis montaj

[00:00:52.850]

and looking at some EM data.

[00:00:56.590]

My name’s Mark Lowe, I’m a project geophysicist

[00:00:59.450]

in the Perth office for Seequent.

[00:01:02.990]

And I’m just going through the plan for this webinar.

[00:01:08.190]

Just before we do some housekeeping,

[00:01:10.170]

there is an option to add some comments

[00:01:12.950]

and chats in the link that’s there provided on the screen.

[00:01:19.180]

Definitely encourage people to use that

[00:01:22.230]

to type your questions in and send them through

[00:01:24.040]

and I’ll endeavor to get back to you

[00:01:28.920]

soon after the webinar.

[00:01:32.930]

So today we’re going to be looking at a dataset.

[00:01:37.100]

It’s an airborne EM data set,

[00:01:38.460]

regional data set in Queensland.

[00:01:40.940]

We’re going to go through setting up the TDEM database,

[00:01:46.790]

looking at the EM utilities extension,

[00:01:50.150]

noise calculations, tau calculations,

[00:01:52.860]

viewing coincident arrays and error envelopes,

[00:01:55.810]

and making some changes to those.

[00:01:58.100]

And also setting up

[00:01:58.980]

and running a unconstrained VOXI inversion

[00:02:02.230]

using the 1D TDEM VOXI tool.

[00:02:05.870]

The data for today is courtesy of Geoscience Australia.

[00:02:10.210]

If you were to search for the Mount Isa East VTEM survey,

[00:02:16.440]

that’s the data set we’ll be looking at today.

[00:02:22.130]

Welcome to this webinar on the EM utilities extension,

[00:02:25.390]

and also VOXI TDEM inversion within Oasis montaj.

[00:02:31.110]

My name’s Mark Lowe,

[00:02:32.130]

I’m going to be presenting today,

[00:02:34.470]

looking at an airborne EM survey in Queensland, Australia.

[00:02:39.410]

And we’re looking at modeling a conductive anomaly there.

[00:02:43.360]

Using the EM utilities extension

[00:02:46.650]

for QA/QC and inversion setup,

[00:02:50.070]

and also for looking at the time constant calculation.

[00:02:53.410]

And then we’ll be prepping the data set

[00:02:56.400]

for inversion in VOXI and running an inversion

[00:03:00.290]

and having a look at the results

[00:03:01.460]

so that we can share them

[00:03:02.320]

to a geological modeling platform

[00:03:05.400]

or viewing those in the 3D viewer.

[00:03:07.910]

So to start off with today,

[00:03:09.057]

I’m just going to create a new project

[00:03:13.160]

and give it a name.

[00:03:19.200]

And I’m going to load the EM utilities extension

[00:03:22.270]

using the manage menus icon

[00:03:24.460]

in the Project Explorer pane up here.

[00:03:32.120]

So EM utilities is a tool with a couple of

[00:03:36.850]

it’s a menu item with a couple

[00:03:37.860]

of different tools for setting up a database

[00:03:40.952]

for a time domain EM system.

[00:03:44.770]

So usually an airborne system,

[00:03:46.007]

but it could also be a ground-based system.

[00:03:50.380]

So setting up the time windows

[00:03:52.910]

and also doing noise calculations

[00:03:56.590]

and time constant calculations as well.

[00:03:59.809]

We’re going to bring in our database.

[00:04:04.520]

And this database I’ve gotten straight

[00:04:06.590]

from Geoscience Australia website courtesy of them.

[00:04:09.990]

It is a dB/dt data set near Mount Isa.

[00:04:14.950]

And I’ve already selected a number of lines

[00:04:17.283]

that I’m interested in,

[00:04:19.280]

which are centered over a target,

[00:04:22.260]

which is actually on line 1940.

[00:04:24.580]

It’s a stratigraphic unit

[00:04:25.860]

that’s fairly conductive

[00:04:27.280]

and has some orientation information I’d like to model.

[00:04:31.180]

So I’m just going to firstly create a subset database

[00:04:34.720]

based on this database that they’ve provided.

[00:04:38.490]

And then we’ll just get it ready to invert.

[00:04:51.560]

The original database is quite large.

[00:04:53.180]

I think around three and a half gigabytes,

[00:04:56.590]

so I’ll just make that smaller.

[00:04:59.210]

And we brought in the database now.

[00:05:02.940]

Oh, here it is.

[00:05:03.773]

Sorry, wrong one.

[00:05:04.730]

And I’ve got all my lines selected.

[00:05:07.350]

And what I’d like to do, firstly,

[00:05:09.020]

is just use the coordinate systems tool.

[00:05:12.170]

So right click on my easting coordinate system.

[00:05:14.980]

And I’ll just set up the coordinate system.

[00:05:16.560]

So VOXI requires projected coordinates

[00:05:21.780]

for running the model.

[00:05:22.760]

So everything is run in a projected coordinate system.

[00:05:27.780]

So in this case, I’m going to convert,

[00:05:30.180]

it’s already actually in a projection.

[00:05:32.540]

It’s actually in GDA94 MGA zone 54.

[00:05:35.546]

So I’m going to assign that.

[00:05:37.282]

That’s one of my favorites anyway,

[00:05:39.170]

and bring that in.

[00:05:41.320]

So my X and Y has now been assigned.

[00:05:44.660]

They obviously have made us,

[00:05:45.730]

I might just remove some of the unnecessary channels,

[00:05:49.010]

hiding those pressing the space bar,

[00:05:51.300]

longitude and latitude I don’t need,

[00:05:54.160]

the height will be important.

[00:05:55.610]

So I might keep that channel there.

[00:05:57.270]

I don’t need the laser and radar.

[00:06:00.020]

The current channel,

[00:06:03.270]

we will keep. Now, the power line monitor is also useful

[00:06:07.020]

because of the location of the power lines.

[00:06:09.210]

I might keep that for now,

[00:06:10.750]

some of these other channels that we don’t need,

[00:06:14.400]

and we’re just going to be inverting the vertical component

[00:06:16.790]

rather than the horizontal component today.

[00:06:19.020]

But obviously, if you’d like, VOXI is able to,

[00:06:23.710]

and the EM utilities tool are able

[00:06:26.040]

to run these same analyses

[00:06:27.880]

on the X component of the field as well.

[00:06:31.390]

I’m going to hide it for now

[00:06:33.484]

and I don’t need distance.

[00:06:35.240]

So we’ve got the vertical component here.

[00:06:37.750]

I’m just going to show the array

[00:06:41.680]

and I’m going to show it with a logarithmic stretch.

[00:06:46.950]

And I’ll just rescale that.

[00:06:49.500]

So here’s our survey data that’s been provided

[00:06:54.410]

and just cycle through lines here.

[00:06:58.880]

This section here is the anomaly

[00:07:01.640]

I’d like to look at a bit further.

[00:07:03.970]

If I bring up,

[00:07:05.350]

you can see there are some noisy areas here,

[00:07:07.280]

actually bring up the power line monitor.

[00:07:11.510]

There are some high-voltage power lines in the area

[00:07:15.480]

to be careful with.

[00:07:16.520]

So they obviously are affecting

[00:07:17.900]

the measured component there as well,

[00:07:20.320]

and quite a way either side of the line.

[00:07:23.520]

So I could produce a mask channel

[00:07:25.850]

for the power line monitor,

[00:07:27.060]

and then to make sure that that’s not included

[00:07:31.020]

when we’re doing some of these calculations.

[00:07:34.910]

So I’m going to create a mask

[00:07:35.950]

and I’ll just do a threshold

[00:07:38.260]

based on around about one standard deviation

[00:07:41.230]

of that power line monitor.

[00:07:46.280]

Sorry, just greater than three,

[00:07:47.390]

Oh, it’s telling me there’s a syntax error.

[00:07:53.610]

Here we go.

[00:07:54.690]

Just a simple Boolean expression.

[00:07:57.260]

And we set it a little bit low.

[00:08:03.480]

It removes information that we want to try and model.

[00:08:09.240]

There we go.

[00:08:12.310]

So play around with the mask

[00:08:13.300]

and this is just so that I’m not bringing in

[00:08:15.420]

any extra noise when we are doing

[00:08:17.690]

our noise calculations in here.
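
The masking step described above can be sketched outside Oasis montaj as a simple Boolean threshold. This is only an illustration: the function name, the sample readings, and the use of `None` as a dummy value are assumptions, and the threshold of 3 simply echoes the "greater than three" mentioned in the talk.

```python
def powerline_mask(plm_values, threshold=3.0):
    """Return 1 where the power line monitor is at or below the threshold
    (keep the row) and None (a stand-in for a dummy) where it is above."""
    return [1 if value <= threshold else None for value in plm_values]


# Hypothetical power line monitor readings along one flight line.
plm = [0.5, 0.6, 0.4, 0.5, 9.0, 12.0, 0.7, 0.5]
mask = powerline_mask(plm)
print(mask)
```

If the threshold is set too low, rows over the target are dummied out as well, which is the effect noticed in the walkthrough before the value was adjusted.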

[00:08:20.560]

Another thing I can notice here is that

[00:08:22.360]

we’ve got some, some nulls in there as well.

[00:08:25.350]

I’m going to look at the array

[00:08:27.785]

and there are some null channels

[00:08:29.730]

in those early times that were obviously

[00:08:31.090]

not recorded quite close to the off time.

[00:08:33.540]

And again, right at the end,

[00:08:36.830]

looks like it goes up to row 49 and the last data point is 48.

[00:08:39.810]

So the last window is also not collected.

[00:08:42.550]

And I’ve actually brought in the window database straight

[00:08:45.990]

from the logistics report.

[00:08:48.420]

So I created a window data set here,

[00:08:52.750]

which shows the table of windows straight

[00:08:56.680]

from the logistics report.

[00:08:59.370]

And I’m going to use this

[00:09:01.300]

it has the index of each of those windows.

[00:09:05.120]

I’m going to use that to set up

[00:09:06.470]

our array-based properties for this data,

[00:09:08.770]

but firstly, I’m going to have to subset this.

[00:09:11.210]

so there are no dummies in there as well.

[00:09:13.490]

So what I’ll do is I’ll just firstly, subset the data

[00:09:16.670]

by going to database tools,

[00:09:18.790]

array channels, subset array,

[00:09:22.467]

I’m going to input array

[00:09:26.480]

and output the other subset.

[00:09:29.830]

It’s going to be dB/dt,

[00:09:33.600]

start element will be the fourth element

[00:09:36.547]

and the end element will be the 48th.

[00:09:42.466]

And now our first value there is,

[00:09:46.740]

is the first recorded value as well.

[00:09:52.580]

Seems a little low.

[00:09:58.817]

I might just do a quick check just

[00:10:00.110]

to make sure I’m not removing any data.

[00:10:02.220]

First values should be 3.3.

[00:10:06.800]

Right it’s the same values.

[00:10:07.850]

So that’s good.

[00:10:08.683]

So just subset it to the first values.
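
As a rough illustration of the subsetting step, the sketch below trims an array row to the recorded windows only. It assumes zero-based indices, so elements 4 to 48 inclusive give 45 values; the row contents are made up, apart from the first recorded value of 3.3 quoted in the talk.

```python
DUMMY = None  # stand-in for a Geosoft dummy value


def subset_array(row, start, end):
    """Return elements start..end inclusive, using zero-based indices."""
    if not 0 <= start <= end < len(row):
        raise IndexError("start/end fall outside the array")
    return row[start:end + 1]


# Hypothetical 50-element row: 4 unrecorded early windows,
# 45 recorded values, and 1 unrecorded final window.
row = [DUMMY] * 4 + [3.3 - 0.05 * i for i in range(45)] + [DUMMY]
subset = subset_array(row, 4, 48)
print(len(subset), subset[0])
```

Checking that the first value of the subset matches the first recorded value in the original array is the same sanity check performed in the walkthrough.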

[00:10:11.340]

And so I can remove that channel now,

[00:10:13.830]

I’ve replaced it with the dB/dt

[00:10:16.800]

and I’m going to set up the

[00:10:19.050]

array-based properties by right clicking

[00:10:21.010]

and selecting array based properties.

[00:10:24.790]

In here I’m going to import from our window database

[00:10:27.590]

and this wasn’t provided, but I just, yeah,

[00:10:30.610]

I just copied and pasted those results in

[00:10:32.150]

from the logistics report associated with that survey.

[00:10:36.030]

And you can see there’s a couple of different ways

[00:10:37.440]

we can set up these time windows.

[00:10:40.690]

Starts and ends, mid times and widths, for example,

[00:10:43.740]

and it’ll automatically pick up the,

[00:10:45.357]

the index offset as I’ve determined in here as well.

[00:10:50.390]

But because I’ve removed that offset,

[00:10:52.560]

I’m going to just bring that back to zero here.

[00:10:58.340]

So here we go, I’ve got my width.

[00:11:00.050]

They’re all increasing, which is, which is great.

[00:11:02.250]

That’s how it should be set up.

[00:11:04.370]

And it’s continuous all the way through start

[00:11:07.490]

and end times are all in there as well.

[00:11:10.040]

And there’s a few options for base as well,

[00:11:12.150]

but we’ve just brought in discrete time windows for each.
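
The window checks mentioned above (widths that keep increasing, and start and end times that run continuously) can be sketched as follows. The window times are hypothetical values in milliseconds, not the ones from the logistics report, and the function names are illustrative only.

```python
def window_mid_and_width(starts, ends):
    """Convert start/end times into mid times and widths."""
    mids = [(s + e) / 2 for s, e in zip(starts, ends)]
    widths = [e - s for s, e in zip(starts, ends)]
    return mids, widths


def check_windows(starts, ends, tol=1e-9):
    """Return (widths_non_decreasing, windows_contiguous)."""
    _, widths = window_mid_and_width(starts, ends)
    increasing = all(w2 >= w1 - tol for w1, w2 in zip(widths, widths[1:]))
    contiguous = all(abs(e - s) <= tol for e, s in zip(ends, starts[1:]))
    return increasing, contiguous


# Hypothetical window table in milliseconds.
starts = [0.018, 0.023, 0.029, 0.036]
ends = [0.023, 0.029, 0.036, 0.045]
print(check_windows(starts, ends))
```

A `False` in either position would suggest a copy-and-paste slip in the window table or an index offset that no longer matches the subset array.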

[00:11:17.510]

So if I just plot that again,

[00:11:22.230]

what we can do here is

[00:11:24.240]

we can also show selected array elements

[00:11:26.320]

rather than all of the array elements.

[00:11:27.990]

So this can be useful for looking at early times,

[00:11:31.760]

late times, every fifth channel, for example,

[00:11:35.850]

just for now, I’m going to show some late time channels,

[00:11:37.970]

the last 10 channels.

[00:11:39.860]

And you can just see it as another way

[00:11:41.010]

of sort of observing that, that data in there.

[00:11:44.010]

I haven’t got the logarithmic stretch.

[00:11:46.830]

I’ll apply that again

[00:11:50.910]

and just rescale.

[00:11:54.120]

So there’s that feature there, I was trying to model.

[00:11:57.290]

So, EM Utilities.

[00:11:59.530]

So we set up the data.

[00:12:01.280]

Now we can go ahead and calculate noise on this data set.

[00:12:05.410]

So usually what you want to do is

[00:12:07.600]

look for an area which is fairly resistive

[00:12:09.520]

to calculate noise on

[00:12:11.090]

as it will be a background response for the survey area.

[00:12:15.650]

So if I just go ahead and show all of those,

[00:12:21.770]

all of those array elements again.

[00:12:26.220]

Here it is

[00:12:27.053]

and I’ll just do the logarithmic stretch.

[00:12:38.380]

And rescale.

[00:12:40.780]

So we’re looking for areas which are fairly continuous,

[00:12:44.360]

kind of show a pretty average sort of background response.

[00:12:52.660]

So in here, for example,

[00:12:53.570]

there’s a bit of a late-time anomaly

[00:12:55.170]

sort of going all the way through in there,

[00:13:00.530]

but let’s try another line.

[00:13:04.390]

But, for example, in here

[00:13:06.040]

it’s pretty flat overall,

[00:13:09.060]

does bounce around a little,

[00:13:10.190]

but there’s no serious noise in there.

[00:13:13.130]

It’s away from those spikes

[00:13:17.400]

that are obviously the power lines,

[00:13:18.990]

and we can use that to calculate our noise.

[00:13:24.063]

So we’re going to go to Corrections,

[00:13:25.630]

Calculate Noise, input that dB/dt,

[00:13:29.890]

we could select the mask.

[00:13:32.120]

I won’t worry for now.

[00:13:33.470]

And I can select those marked rows as well.

[00:13:40.740]

And you can see I could calculate

[00:13:42.160]

based on those marked rows,

[00:13:43.460]

I could calculate based on the displayed line,

[00:13:46.670]

which will bring in the entire line,

[00:13:49.630]

selected lines, all lines for example.

[00:13:52.950]

But now I want to do it on the marked rows.

[00:13:54.190]

You can see the values get quite low

[00:13:55.810]

and bounce around zero.

[00:13:56.950]

In this case, I estimate the noise because of that.

[00:14:02.550]

Some of those mid-ish to late times

[00:14:05.100]

were bouncing around sort of after that

[00:14:06.670]

0.01 picovolts per amp meter to the fourth value.

[00:14:11.229]

I set that as a noise floor.

[00:14:14.110]

So it’s on from about channel 20 onwards.
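
The transcript does not say exactly how the noise calculation works, so the following is only a plausible sketch: a per-channel standard deviation over the marked, resistive rows, with the 0.01 noise floor applied wherever the estimate falls below it. The data values and the function name are hypothetical.

```python
from statistics import pstdev


def estimate_noise(rows, floor=0.01):
    """Per-channel noise estimate: the standard deviation across the
    selected rows, never allowed to drop below the noise floor."""
    n_channels = len(rows[0])
    noise = []
    for ch in range(n_channels):
        column = [row[ch] for row in rows]
        noise.append(max(pstdev(column), floor))
    return noise


# Hypothetical marked rows: one early, one mid and one late channel.
marked_rows = [
    [1.0, 0.02, 0.001],
    [1.2, 0.01, -0.001],
    [0.8, 0.03, 0.000],
]
noise = estimate_noise(marked_rows)
print(noise)
```

In this toy data the mid and late channels scatter by less than the floor, so both take the floor value, similar to the behaviour described from about channel 20 onwards.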

[00:14:17.830]

Press okay and talking about units,

[00:14:23.080]

that’s something we have to set as well.

[00:14:24.380]

I’m going to edit this channel,

[00:14:26.600]

make sure that the units are applied in here.

[00:14:29.120]

So I’m going to grab these directly

[00:14:32.300]

from the logistics report actually.

[00:14:35.180]

And you can see that the SFZ channel, there it is.

[00:14:41.070]

I use a selection tool that is the units there,

[00:14:45.173]

which you want to use.

[00:14:51.130]

Could this be time data?

[00:14:52.200]

Press okay.

[00:14:54.131]

So we’ve calculated the noise.

[00:14:55.940]

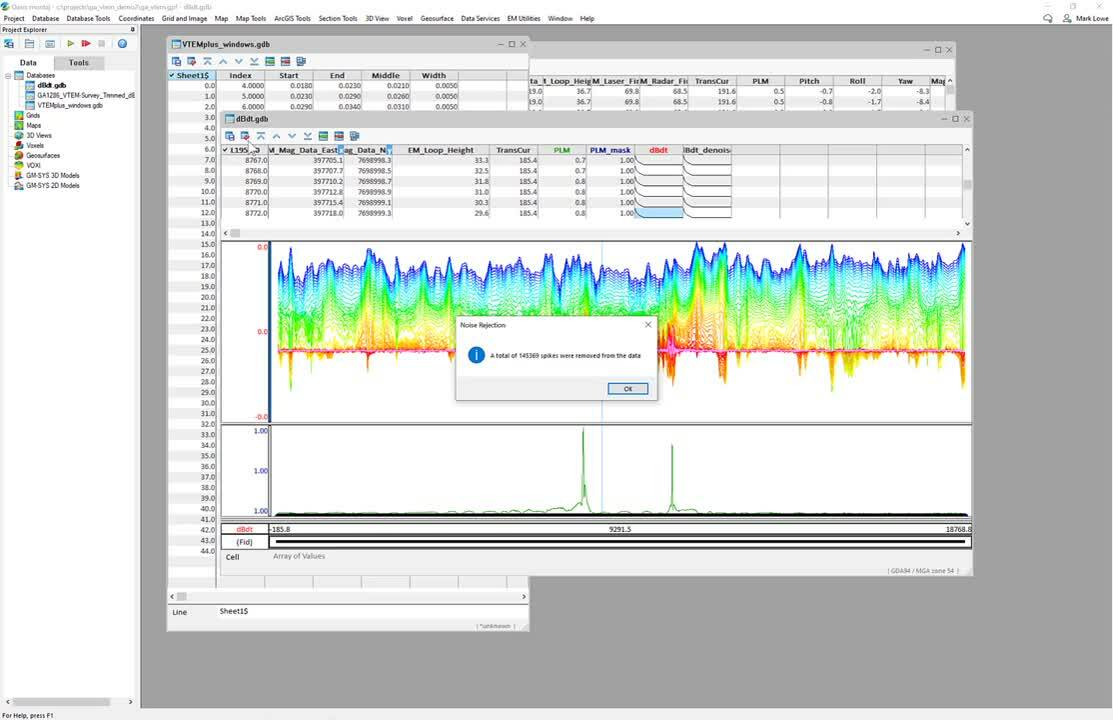

Now we’re going to create a denoised data set.

[00:14:59.040]

So reject noise,

[00:15:01.600]

the output is going to be our dB/dt denoise,

[00:15:07.150]

lines will be all lines; selected lines is the same.

[00:15:11.270]

And you can see, I can,

[00:15:12.103]

I could also put a global multiplier in there as well,

[00:15:15.170]

sort of a factor that we could apply.

[00:15:20.250]

As we look at the results after we apply this noise,

[00:15:23.590]

we don’t want to be getting rid of real data.

[00:15:25.510]

We don’t want it to be underestimating the noise as well.

[00:15:28.820]

For now, I’m quite happy with that.

[00:15:31.710]

I’ve selected that range to do it on.

[00:15:34.080]

And the benefit of having a previous look at it as well,

[00:15:39.854]

a total of 145,000 spikes removed from the data.

[00:15:43.360]

So overall, it’s not, not heaps in this set of lines.

[00:15:47.280]

So we’ve got about 45 data points per row,

[00:15:49.960]

and there’s tens of thousands of rows per section of lines.

[00:15:53.430]

So it’s not, not a huge amount.

[00:15:55.970]

And we’ll see if we can see some of the effects on there.
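
The algorithm behind the noise rejection tool is not described in the talk. As a hedged illustration of the general idea only, the sketch below replaces a sample when it departs from a three-point running median by more than the channel noise times a global multiplier, and counts how many samples were replaced. All names and values are hypothetical.

```python
from statistics import median


def reject_noise(row, noise, multiplier=1.0):
    """Replace interior samples that differ from the 3-point median of the
    original row by more than multiplier * noise.
    Returns (cleaned_row, number_of_samples_replaced)."""
    cleaned = list(row)
    replaced = 0
    for i in range(1, len(row) - 1):
        expected = median(row[i - 1:i + 2])
        if abs(row[i] - expected) > multiplier * noise[i]:
            cleaned[i] = expected
            replaced += 1
    return cleaned, replaced


# Hypothetical late-time decay with one spike at index 2.
decay = [0.104, 0.103, 0.150, 0.101, 0.100]
noise_floor = [0.01] * len(decay)
cleaned, n_spikes = reject_noise(decay, noise_floor)
print(cleaned, n_spikes)
```

Raising the multiplier makes the test more tolerant, which guards against removing real signal; lowering it removes more samples, at the risk of smoothing away genuine responses.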

[00:16:00.880]

What I might do is,

[00:16:01.713]

I’ll just show the de-noise channel as well,

[00:16:05.200]

which looks pretty similar to the above.

[00:16:16.270]

And if I just bring that down a little bit,

[00:16:20.538]

make this view a little larger.

[00:16:25.210]

We find it difficult to see many changes in there.

[00:16:30.840]

I’ll go back to that line of sort of interest in there.

[00:16:34.240]

So what’s another way that we could observe this data

[00:16:38.120]

rather than these array profiles.

[00:16:40.920]

So in EM utilities there’s another tool we can use,

[00:16:43.370]

if I just select an area of interest,

[00:16:45.210]

which I’m going to do it near sort

[00:16:48.170]

of a gradient changing area,

[00:16:51.350]

sort of in here,

[00:16:54.410]

see if there’s any effects on any of these array elements.

[00:16:58.070]

If you go to EM Utilities, Interpretation, View

[00:17:01.340]

Coincident Arrays,

[00:17:03.940]

this is a really powerful tool

[00:17:05.340]

because it allows you to show the onboard noise

[00:17:09.150]

that you’ve just calculated

[00:17:11.380]

and also show comparison to your de-noise channel.

[00:17:15.680]

And if I just change the scaling

[00:17:20.090]

and I’ll plot that noise floor as well,

[00:17:28.730]

we could see the effect on here

[00:17:30.890]

and also move this around

[00:17:33.110]

to an area of interest.

[00:17:35.360]

So I’m going to get back to that,

[00:17:38.540]

that bit that I was interested in

[00:17:41.880]

sort of close to that anomaly there.

[00:17:44.200]

I just want to see if there’s any, ah, there we go.

[00:17:45.863]

There’s a green value in there.

[00:17:48.570]

So this was changed from 0.102 to 0.115.

[00:17:52.830]

So it was obviously slightly noisy,

[00:17:55.560]

slightly outside of that standard deviation

[00:17:57.400]

or that noise floor in this case,

[00:17:58.790]

I think I had 0.01.

[00:18:00.650]

And so it has, has fitted that point slightly.

[00:18:04.210]

So those are the sorts of things,

[00:18:05.760]

we can test and see if it’s doing a good job.

[00:18:08.780]

Oh, there was another one.

[00:18:09.613]

So in here we’ve got a bit of noise

[00:18:14.560]

and it’s fitted that point as well.

[00:18:18.820]

So if I scale these to, we’ll get to speak to that scale.

[00:18:25.310]

Of course, scale to all channels,

[00:18:29.910]

put a fixed range on this so that it stays consistent,

[00:18:34.980]

lots of tools we can use in this View Coincident Arrays window.

[00:18:38.820]

And of course it’s all data tracked as well.

[00:18:41.460]

We can see that location.

[00:18:42.640]

If we have a map view,

[00:18:43.660]

we can see where that,

[00:18:44.493]

that represents that on a map as well.

[00:18:47.830]

So I’m going to use that again later on.

[00:18:50.000]

For now we’re going to do a tau calculation.

[00:18:53.050]

So just go down to EM utilities interpretation,

[00:18:55.840]

tau calculation,

[00:18:57.890]

and select our denoised calculated data

[00:19:02.380]

or our dB/dt,

[00:19:04.750]

either one. Set the lines we want to run it on.

[00:19:08.130]

So maybe just the selected line

[00:19:10.700]

and it’s going to create a fit tau,

[00:19:12.650]

which will be the maximum tau

[00:19:15.260]

from those windows that we set.

[00:19:18.690]

In this case it can be a five-point moving average

[00:19:20.560]

down the array,

[00:19:22.790]

the minimum and maximum values obviously set

[00:19:24.840]

from the base frequency.

[00:19:26.620]

I think this was 25 hertz.

[00:19:28.200]

So 20 milliseconds sounds about right.
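
The 20 millisecond figure follows from the base frequency if one assumes a bipolar waveform in which each half-period holds one on-time and one off-time. A small sketch of that arithmetic, with a hypothetical function name:

```python
def half_period_ms(base_frequency_hz):
    """Half of one waveform period, in milliseconds."""
    return 1000.0 / (2.0 * base_frequency_hz)


print(half_period_ms(25.0))
```

At 25 Hz the full period is 40 ms, so the half-period is 20 ms, which is consistent with the upper bound quoted for the tau search.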

[00:19:31.080]

And we can set those parameters

[00:19:34.480]

to use particular channels or not.

[00:19:36.700]

It does get noisy towards the end.

[00:19:38.250]

I could switch those off as well.

[00:19:39.920]

There’s quite a lot of settings

[00:19:41.470]

you could apply in here as well.

[00:19:43.970]

So let’s go and press okay for now

[00:19:46.220]

and to calculate what I just did on one line,

[00:19:54.400]

that’s okay.

[00:19:55.233]

While that’s running.

[00:19:56.066]

So, so time constants or tau values,

[00:19:59.370]

obviously could be used

[00:20:00.830]

as an interpretation tool in themselves

[00:20:02.720]

that could be gridded up, that maximum tau value.

[00:20:05.616]

You could show it on a profile and look for peaks.

[00:20:10.610]

It’s definitely another tool there

[00:20:12.960]

that can be used to see,

[00:20:14.880]

the characteristics of conductors.

[00:20:25.150]

I can show that value

[00:20:26.980]

and obviously there’s a bit of noise in there.

[00:20:29.560]

And then we can say that there is a peak

[00:20:32.130]

with a particular peak value as well.

[00:20:34.103]

4.9, for example, milliseconds.
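
One common way to estimate a time constant, shown here purely as a sketch and not as the EM Utilities implementation, is to fit a straight line to the logarithm of the decay over a sliding window of channels and take tau as the negative reciprocal of the slope. The five-point window, the 20 ms upper limit, and the synthetic decay with a 4.9 ms time constant are assumptions chosen to mirror numbers mentioned in the talk.

```python
import math


def fit_tau(times, values):
    """Least-squares fit of ln(V) = a - t / tau over one window.
    Returns tau, or None when the window is not a positive decay."""
    if any(v <= 0 for v in values):
        return None
    n = len(times)
    logs = [math.log(v) for v in values]
    t_mean = sum(times) / n
    l_mean = sum(logs) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (l - l_mean) for t, l in zip(times, logs))
    slope = sxy / sxx
    return -1.0 / slope if slope < 0 else None


def max_tau(times, values, window=5, tau_min=0.0, tau_max=20.0):
    """Largest tau found by sliding a fixed-size window down the array."""
    best = None
    for i in range(len(times) - window + 1):
        tau = fit_tau(times[i:i + window], values[i:i + window])
        if tau is not None and tau_min <= tau <= tau_max:
            best = tau if best is None else max(best, tau)
    return best


# Synthetic decay (times in ms) with a single 4.9 ms time constant.
times_ms = [0.5 * i for i in range(1, 11)]
decay = [math.exp(-t / 4.9) for t in times_ms]
print(max_tau(times_ms, decay))
```

On real data the late channels are noisy, so restricting which channels take part in the fit, as mentioned in the walkthrough, would matter far more than it does for this clean synthetic decay.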

[00:20:37.963]

All right, so that’s some of the tools

[00:20:41.550]

in EM utilities apart from that

[00:20:43.770]

is VOXI TDEM.

[00:20:45.620]

So let’s go ahead. We’ll do a model

[00:20:48.200]

of some of these lines as well

[00:20:50.390]

and do an inversion.

[00:20:52.540]

So firstly,

[00:20:53.373]

I’ll just create a map showing these line locations,

[00:20:56.080]

map new, scan the data,

[00:20:58.833]

next, I’ll just call it a base map

[00:21:02.900]

and just to show that line path as well,

[00:21:06.830]

don’t need compass direction.

[00:21:09.010]

Okay, here we go.

[00:21:10.260]

It’s got the line path on there.

[00:21:12.160]

And obviously we could show the power line monitor,

[00:21:16.380]

it would show where the power lines are.

[00:21:18.710]

We could grid the tau channel, for example,

[00:21:21.400]

lots of other things we could do to try

[00:21:22.690]

and highlight that anomaly.

[00:21:24.150]

I’ve already created a polygon,

[00:21:27.340]

which was from Create a Polygon File.

[00:21:30.240]

I’m going to draw that polygon.

[00:21:31.940]

The reason why I created this,

[00:21:33.890]

was so that it’s, it makes it quite easy

[00:21:36.680]

to set up the model in VOXI as well,

[00:21:38.900]

over an area of interest.

[00:21:40.070]

So actually had an area here

[00:21:42.680]

that I’d like to model further.

[00:21:44.300]

It’s centered over that

[00:21:46.400]

that conductive body I showed before

[00:21:49.180]

and the time windows.

[00:21:50.460]

So also the next thing that you will need,

[00:21:54.168]

you need three main things for VOXI inversion.

[00:21:57.300]

That’s that polygon, it’s the database or a grid

[00:22:01.040]

in this case will be a database for EM data

[00:22:05.070]

having all of that array data.

[00:22:07.250]

And you’re also going to need the topography.

[00:22:09.830]

So I’ve just grabbed some topography data

[00:22:11.630]

from data services using Seeker.

[00:22:13.270]

It’s the SRTM 30 meter topography,

[00:22:16.220]

which is fine for the resolution of this area.

[00:22:18.560]

This is a regional exploration survey,

[00:22:24.240]

two kilometers between each of these survey lines.

[00:22:27.720]

So the SRTM topography will be fine.

[00:22:32.020]

Obviously I can display that on here.

[00:22:35.612]

There it is,

[00:22:38.020]

and I’ll just put a topography stretch on there too.

[00:22:43.490]

So that, that was just covering that,

[00:22:45.830]

that area of interest there as well.

[00:22:47.137]

And there’s my topography and my polygon.

[00:22:50.500]

So I’m going to go ahead

[00:22:51.590]

and create a new VOXI from polygon.

[00:22:55.150]

I’ll call it VTEM 1D time domain inversion.

[00:23:02.600]

The polygon I had was area of interest

[00:23:06.200]

and the topography is the SRTM.

[00:23:09.980]

So it’s just going to discretize that up

[00:23:11.740]

into a sort of a general resolution

[00:23:14.070]

based on the size of the area.

[00:23:17.300]

Make sure I select 1D TDEM

[00:23:20.488]

and I’ll boost up the resolution

[00:23:21.830]

just to make sure it’s below the

[00:23:23.000]

250 by 250 subscription size cutoff as well.

[00:23:27.940]

And press okay.
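
A quick way to reason about the mesh size before committing to a cell size is to count the cells along each horizontal direction. The sketch below assumes that the "250 by 250" cutoff means a maximum number of cells in each horizontal direction, which is an interpretation rather than something stated in the talk; the area extents and function names are hypothetical.

```python
import math


def horizontal_cell_counts(x_extent_m, y_extent_m, cell_x_m, cell_y_m):
    """Number of mesh cells needed to span the area in X and Y."""
    nx = math.ceil(x_extent_m / cell_x_m)
    ny = math.ceil(y_extent_m / cell_y_m)
    return nx, ny


def within_limit(nx, ny, limit=250):
    """True when both horizontal cell counts are at or below the limit."""
    return nx <= limit and ny <= limit


# Hypothetical 10 km by 6 km area of interest.
nx, ny = horizontal_cell_counts(10_000, 6_000, 50, 300)
print(nx, ny, within_limit(nx, ny))
```

Halving the along-line cell size to 25 m in this example would double the count to 400 cells and exceed the assumed limit.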

[00:23:34.520]

So what this will do,

[00:23:35.353]

it’s going to create a mesh

[00:23:36.310]

based on those dimensions, input the topography,

[00:23:39.763]

do a Cartesian cut to the top surface of that

[00:23:43.060]

with the top cells.

[00:23:45.150]

And then it’s going to do the next thing

[00:23:47.920]

apart from trimming it to the area

[00:23:49.580]

is ask to add data to it.

[00:23:51.820]

So this is where we can go through three simple steps

[00:23:54.910]

to bring in that EM data.

[00:23:56.670]

So I’m going to go, yes.

[00:23:58.680]

And we’ll go ahead and bring in the data.

[00:24:02.760]

Okay, so it’s noticed that I haven’t saved it

[00:24:04.540]

since I’ve made some changes.

[00:24:06.500]

So I just want to make sure that

[00:24:09.712]

those changes are all saved.

[00:24:13.610]

So yes, and it’s going to automatically

[00:24:17.390]

bring in the easting and northing channels.

[00:24:19.350]

And with the elevation,

[00:24:21.640]

you have a couple of options,

[00:24:22.810]

either the elevation itself or a terrain clearance,

[00:24:26.270]

which we have the EM loop heights.

[00:24:28.330]

I’m going to use that as terrain clearance here.

[00:24:31.418]

The optimized sampling we want to make sure is,

[00:24:34.020]

is set to speed up our calculations here

[00:24:36.860]

’cause obviously the along-line distance

[00:24:41.290]

at the base frequency it’s going

[00:24:43.440]

to be quite close between each survey point,

[00:24:46.590]

maybe 10 or so meters.

[00:24:48.200]

And I’m going to be using more around

[00:24:50.120]

a 50 to a 100 meter cell size here.

[00:24:52.210]

So I want to optimize that to speed up that inversion.

[00:24:56.980]

One sample per cell is quite enough,

[00:24:59.160]

it’s a conductivity model.
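
The optimized sampling idea, keeping one sample per mesh cell when stations are only about 10 m apart but cells are 50 to 100 m wide, can be sketched as below. How VOXI actually picks or averages the sample within a cell is not stated in the talk; this version simply keeps the first sample that falls in each cell, and the function name is hypothetical.

```python
def one_sample_per_cell(distances_m, cell_size_m):
    """Return the indices of the first sample found in each along-line cell."""
    kept = []
    seen_cells = set()
    for index, distance in enumerate(distances_m):
        cell = int(distance // cell_size_m)
        if cell not in seen_cells:
            seen_cells.add(cell)
            kept.append(index)
    return kept


# Hypothetical stations every 10 m along a line, with 50 m cells.
distances = [10 * i for i in range(20)]
kept_indices = one_sample_per_cell(distances, 50)
print(kept_indices)
```

Here 20 stations reduce to 4, which is the kind of reduction that speeds up the inversion without losing information the mesh could resolve.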

[00:25:01.560]

The type of data: it is a VTEM system.

[00:25:03.460]

I had to change the configuration slightly for this one.

[00:25:06.270]

It is a long pulse,

[00:25:07.610]

and I’ll just put a little subscript GA on there.

[00:25:09.810]

The way I did that is I just set up

[00:25:11.230]

a new configuration in here.

[00:25:13.300]

I wrote it in and put in the parameters

[00:25:14.970]

for transmitter and receiver

[00:25:16.650]

and the waveform and everything in there.

[00:25:21.690]

The channel is my denoised channel.

[00:25:24.560]

You can see there are options for the X component.

[00:25:27.120]

I’m just going to do the vertical for now,

[00:25:29.200]

and I’ve actually calculated the noise.

[00:25:33.440]

So I’m going to use that here.

[00:25:36.600]

So that calculated noise is actually attached

[00:25:39.190]

to that channel now,

[00:25:41.300]

and we can load that from the EM channel.

[00:25:43.400]

And again, it’s going to ask that same error multiplier.

[00:25:46.410]

Which I set as one before, so I’m happy with that.

[00:25:48.720]

I’m going to stay with just no error multiplier applied.

[00:25:53.680]

Press next, some parameters around what’s going to import,

[00:25:57.600]

which all look good, start time, end time,

[00:25:59.890]

et cetera finish that.

[00:26:01.900]

And it’s going to go ahead

[00:26:02.733]

and bring in that data set,

[00:26:04.400]

sub-sample it to the mesh size

[00:26:07.260]

and create a new subset database

[00:26:09.280]

with the error information,

[00:26:10.900]

as well as the data information.

[00:26:14.490]

So it’s created a new database discretized on that,

[00:26:19.970]

the dimensions that we’ve input here.

[00:26:23.138]

Would you like to add the waveform? Yes.

[00:26:25.440]

So now we want to go ahead

[00:26:26.500]

and add the waveform information,

[00:26:29.240]

and that’s going to come from a database too.

[00:26:30.900]

So Geoscience Australia actually provide

[00:26:34.530]

a waveform with this data set on their website,

[00:26:37.450]

which was fantastic.

[00:26:38.300]

And it was in a Geosoft GDB format as well.

[00:26:41.460]

So I’ll just sort of grab it.

[00:26:47.717]

Whoops, I selected a different database.

[00:26:50.180]

There it is, waveforms press okay.

[00:26:53.860]

And that was straight from the website actually.

[00:26:58.320]

And you can see that it’s brought in the time

[00:27:00.280]

and current channels from there as well,

[00:27:01.620]

which is, which is fantastic.

[00:27:03.130]

You can obviously make this waveform

[00:27:05.270]

from a logistics report

[00:27:07.450]

or from the contractor as well.

[00:27:10.820]

And you can see once that’s imported, it comes

[00:27:12.780]

into this new window,

[00:27:14.540]

which shows the current build up

[00:27:19.900]

and off time as well,

[00:27:22.600]

and the length of that,

[00:27:23.670]

and the window channels all brought in here as well,

[00:27:25.633]

as well as that error database

[00:27:27.690]

that we set up with EM utilities.

[00:27:29.990]

And if we just press the calculate here,

[00:27:32.590]

it’s going to just zoom to that Off-time cutoff

[00:27:36.360]

and then offset based on the start time

[00:27:38.746]

for those first windows

[00:27:40.350]

and continuing windows after there.

[00:27:42.280]

And you can see where each of those windows are.

[00:27:44.970]

And for example, that last window wasn’t being measured.

[00:27:47.330]

So that’s fine.

[00:27:50.130]

So I’m going to press okay.

[00:27:52.183]

So I brought in a waveform.

[00:27:53.560]

I’ve set my channel windows.

[00:27:56.540]

This dataset is, could be ready to invert already,

[00:28:00.470]

but I’m just going to set up my mesh a little differently.

[00:28:03.280]

And,

[00:28:04.600]

and this is actually going to then go back

[00:28:07.570]

to the original database and re-sample it

[00:28:09.860]

based on my new mesh parameters.

[00:28:12.170]

So if I go right click modify, because of,

[00:28:16.730]

I want to try and retain as much

[00:28:18.120]

along-line resolution as possible.

[00:28:20.400]

I’m going to increase this to 50

[00:28:22.700]

and perpendicular to the line direction.

[00:28:26.260]

There’s not much information of course, offline.

[00:28:28.850]

So I could, I could break that down to a larger size,

[00:28:32.470]

much, maybe around 300 off those lines.

[00:28:37.170]

And as much as I can,

[00:28:38.080]

four meters maximum resolution

[00:28:40.050]

in the vertical direction is quite good as well.
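
To get a feel for how those cell sizes drive the size of the model, here is a small Python sketch. It assumes the 50 and 300 are in metres, uses hypothetical survey extents, and treats the vertical cells as a uniform 4 m, which is a simplification (the 4 m in the demo is the finest vertical resolution, and the real mesh may well use coarser cells at depth).

```python
import math

def cell_counts(extent_along, extent_across, extent_depth,
                dx=50.0, dy=300.0, dz=4.0):
    """Number of cells needed to cover each extent with the given cell sizes (metres)."""
    return (
        math.ceil(extent_along / dx),
        math.ceil(extent_across / dy),
        math.ceil(extent_depth / dz),
    )

# Hypothetical extents: 5 km along line, 1.8 km across lines, 400 m deep
nx, ny, nz = cell_counts(5000.0, 1800.0, 400.0)
total_cells = nx * ny * nz
print(nx, ny, nz, total_cells)
```

Keeping the along-line cells small and the across-line cells large puts the resolution where the data actually is, without blowing out the total cell count.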

[00:28:43.490]

And you could set advanced properties,

[00:28:45.220]

if you’d like to as well here.

[00:28:47.816]

I’ll go ahead and press okay.

[00:28:50.230]

So update my mesh, it’ll create a new database,

[00:28:53.240]

re-sample that to that new database.

[00:28:56.160]

It does a lot of things in the backend here

[00:28:57.660]

that you don’t have to worry about.

[00:28:59.590]

But you can look further into these

[00:29:03.050]

and I’ll show you how to do that too,

[00:29:04.350]

cause that can be quite, quite worthwhile.

[00:29:06.810]

So there we go.

[00:29:07.750]

It’s re-sampled to that larger mesh.

[00:29:14.260]

We could right click on the data source

[00:29:16.550]

and press view.

[00:29:17.450]

And if you do this,

[00:29:18.340]

it’ll bring out that new sub-sampled mesh.

[00:29:20.820]

And the reason why I’d like to view this,

[00:29:22.930]

is to make sure that the data I’m inverting

[00:29:25.219]

actually has the anomaly information in it,

[00:29:28.190]

that we want to try and target here.

[00:29:30.840]

So here’s the de-noised channel I’ve imported.

[00:29:34.180]

If I show that just the late times.

[00:29:38.760]

Yeah, there it is.

[00:29:39.690]

That’s great and then if I show my error,

[00:29:45.840]

so this should only show a value of 0.01

[00:29:48.670]

in those late times anyway.

[00:30:00.770]

I can see where that cut off is going to be.

[00:30:04.160]

And obviously it’s going to cut off most of this noise here.

[00:30:06.400]

If it’s below the noise threshold,

[00:30:08.240]

there’s a little bit of geology over here

[00:30:09.610]

it’s going to target

[00:30:10.443]

and this anomaly definitely is going

[00:30:11.670]

to be modeled in the late times.

[00:30:13.020]

So that’s fantastic.

[00:30:13.853]

That’s what I wanted to confirm there.

[00:30:16.930]

So that can be helpful just to check as well.
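
That check can also be sketched outside the software. The Python below is only an illustration with made-up late-time values: it takes the 0.01 error value from the demo as a noise floor (units as in the database, not stated here) and lists which stations still have late-time signal above it. The function name is hypothetical.

```python
import numpy as np

def above_noise(data, noise_floor):
    """Boolean mask of samples whose amplitude exceeds the noise floor."""
    return np.abs(np.asarray(data, dtype=float)) > noise_floor

# Rows are stations along a line, columns are late-time channels (hypothetical values)
late = np.array([
    [0.004, 0.002, 0.001],   # background, all below the floor
    [0.050, 0.030, 0.015],   # over the anomaly
    [0.008, 0.012, 0.003],   # weak response, one channel above the floor
])

mask = above_noise(late, 0.01)
stations_with_signal = np.nonzero(mask.any(axis=1))[0]
print(stations_with_signal)
```

If the stations over the target do not show up in that list, the inversion has nothing in the late times to fit, and the noise settings are worth revisiting before running it.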

[00:30:19.040]

I’m going to go ahead and run this model.

[00:30:22.316]

There are constraints we could set up.

[00:30:24.140]

I don’t have much information at the moment

[00:30:26.390]

about what I expect to see

[00:30:28.210]

if I had a background conductivity,

[00:30:31.020]

I could set that in here.

[00:30:32.490]

I could set a voxel if I’d already run an inversion

[00:30:34.970]

and would like to constrain it further

[00:30:36.300]

based on that, or, sorry,

[00:30:37.450]

start off the model from those parameters

[00:30:39.660]

to speed up the inversion.

[00:30:43.050]

If I had a value of the background conductivity,

[00:30:46.430]

for example, if it was around 20 millisiemens per meter,

[00:30:49.760]

I could have set that as a constant value here as well.

[00:30:54.310]

For now I’m just going to run with the default,

[00:30:56.930]

which is going to start from a zero background.

[00:31:01.560]

And from there, I just go to run the inversion.

[00:31:06.620]

So this will set it up.

[00:31:07.550]

It will send it to the Microsoft Azure cloud.

[00:31:10.160]

It’ll break it all down into a binary format,

[00:31:14.120]

which has no coordinate information in it.

[00:31:17.640]

It’s very secure.

[00:31:18.910]

It’s obviously running on Microsoft Azure platform

[00:31:22.070]

and apart from the security aspects,

[00:31:27.310]

it’s the speed that really is a lot of the benefit here.

[00:31:30.000]

So we can run this inversion.

[00:31:31.260]

It should take around half an hour.

[00:31:33.130]

So I might just switch off for a moment

[00:31:34.940]

and I’ll save you waiting around

[00:31:37.581]

and, we’ll come back, I’ll come back on

[00:31:40.310]

when that inversion is complete,

[00:31:41.690]

but you’ll see that this is going

[00:31:45.220]

to speed up that process,

[00:31:47.700]

and then produce a model here

[00:31:50.070]

that we can have a little bit

[00:31:52.220]

of a further investigation of.

[00:31:55.180]

All right, just let that run

[00:31:56.460]

actually not as long as I thought so,

[00:31:58.680]

it took about 26 minutes to run that inversion

[00:32:04.560]

and we’ve got our results in here.

[00:32:08.010]

So we’ll have a closer look at those

[00:32:10.070]

let’s turn off the mesh.

[00:32:19.310]

So, so is it done?

[00:32:25.760]

So we’ve got a, I’ve got a conductivity model here.

[00:32:28.870]

We’ve got a predicted response.

[00:32:30.830]

We can look at it as well

[00:32:32.160]

and see how it fits to our observed data.

[00:32:34.330]

We could obviously clip through

[00:32:37.620]

the result we got here as well.

[00:32:39.620]

See if we can observe the anomaly in there,

[00:32:41.970]

which we can.

[00:32:43.920]

Not bringing in as much in the deeper areas,

[00:32:48.720]

as I thought, but it’s okay.

[00:32:51.330]

We just needed a little bit more

[00:32:52.410]

of fine-tuning in the setup.

[00:32:57.630]

Okay.

[00:33:00.266]

And that’s just the first pass.

[00:33:02.420]

All right.

[00:33:03.253]

So why don’t we have a look at the predicted response?

[00:33:05.450]

I think this is really worthwhile to start off with,

[00:33:09.380]

and as we know inversion,

[00:33:11.710]

it’s an iterative process.

[00:33:12.960]

This was just one go,

[00:33:13.870]

we can run it again and try and fine-tune that.

[00:33:19.130]

Let’s see if we can tighten up that result as well.

[00:33:23.920]

So you can see when I,

[00:33:24.840]

I clicked to look at the predicted response,

[00:33:27.850]

it’s going to show the original observed data.

[00:33:34.370]

So for example, I can show those late time elements again.

[00:33:39.840]

I could cycle through to the lines of interest,

[00:33:43.650]

and I can also show the final

[00:33:46.010]

or various iterations as well.

[00:33:54.200]

And if I just show that with the same scale

[00:33:58.940]

and can see how well it fits now,

[00:34:00.180]

it can be still a bit tricky in there

[00:34:01.610]

to observe what it looks like for each row.

[00:34:04.330]

So we can still use the EM utilities,

[00:34:06.770]

interpretation, View Coincident Arrays, here as well.

[00:34:10.040]

And if I do that, then I can show my observed data.

[00:34:13.270]

I could show my error channel,

[00:34:17.360]

and I’ll just display

[00:34:19.010]

the second channel as the error

[00:34:20.860]

and show my final inversion and see how they compare.

[00:34:26.960]

And if I just moved down to where that anomaly is,

[00:34:34.240]

again we can see how well it fits.

[00:34:37.290]

And we could probably tell more,

[00:34:41.410]

we can see that there is still a bit,

[00:34:43.210]

that’s not fitting so well here.
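
One way to put a number on "how well it fits" is an error-normalised misfit per channel. The Python below is a minimal sketch with made-up observed, predicted and error values; it is a generic chi-squared style measure, not necessarily the misfit that VOXI itself reports, and the function name is hypothetical.

```python
import numpy as np

def normalised_misfit(observed, predicted, error):
    """Mean squared residual per channel, with residuals scaled by the data error.
    Values near 1 mean the fit is about as good as the assigned error allows."""
    obs = np.asarray(observed, dtype=float)
    pred = np.asarray(predicted, dtype=float)
    err = np.asarray(error, dtype=float)
    residual = (obs - pred) / err
    return np.mean(residual ** 2, axis=0)

# Rows are stations, columns are channels (hypothetical values)
observed = np.array([[1.0, 0.5], [2.0, 1.0], [1.5, 0.5]])
predicted = np.array([[1.0, 0.5], [1.0, 1.0], [1.5, 1.0]])
error = 0.5  # same error assigned to every sample in this sketch

misfit = normalised_misfit(observed, predicted, error)
print(misfit)
```

A channel whose value sits well above 1 is one the model is not reproducing within the assigned error, which points to the channels and stations to look at first.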

[00:34:46.224]

It might be that I can change those model parameters,

[00:34:48.340]

or I could check where those nodes are sitting

[00:34:50.430]

in comparison as well to the mesh size.

[00:34:55.460]

Those could be some mesh parameters.

[00:34:56.660]

We can do a bit of a fix up.

[00:34:59.656]

We can cycle through the other line as well,

[00:35:02.730]

where we can see that late anomaly there,

[00:35:07.520]

see how well it fits.

[00:35:09.230]

We’re focusing on some of these late channels

[00:35:10.857]

and not too bad; early time, it wasn’t fitting so well.

[00:35:13.870]

And a bit later on on these edges

[00:35:15.243]

is not the best fit as well.

[00:35:17.080]

And we can see that in the result,

[00:35:20.620]

it’s a bit more vertical than what we expect,

[00:35:23.650]

but it’s a bit of a first pass.

[00:35:25.960]

What we can do here is you can right click

[00:35:29.040]

and choose display in 3D view.

[00:35:31.460]

And if I create and plot sections along lines,

[00:35:33.620]

these will produce GSF section grids,

[00:35:36.270]

which could also be then copied

[00:35:38.350]

and pasted into Leapfrog Geo

[00:35:42.350]

with the right information, the right location as well.

[00:35:45.792]

So this is the latest version of Leapfrog.

[00:35:48.050]

The June release Leapfrog 2021.1.2

[00:35:52.450]

does allow importing GSF crooked section grids as well.

[00:35:56.956]

Isosurfaces we can generate,

[00:35:59.770]

on this one I might leave that for now,

[00:36:02.700]

but you know, once we fix up

[00:36:04.440]

that inversion a little bit more,

[00:36:05.930]

when we’re doing that iterative process,

[00:36:07.950]

rerunning it again, it might be worthwhile,

[00:36:11.690]

probably would be worthwhile producing some isosurfaces

[00:36:15.240]

for particular conductivity values, for example.

[00:36:19.226]

I might just turn off some of these layers

[00:36:20.830]

just to show those section grids,

[00:36:23.210]

turn off the voxel for now as well,

[00:36:25.230]

and turn off the surface.

[00:36:28.140]

And you can see some of those section grids

[00:36:29.880]

in there as well.

[00:36:33.160]

And obviously we could do some further modeling

[00:36:37.320]

and do some isosurfaces of that as well,

[00:36:40.300]

but that’s just a rundown on using those tools.

[00:36:43.080]

Of course the next thing I’d do,

[00:36:43.913]

I’d go back to the model

[00:36:46.370]

and see if we can fix this up a little bit further.

[00:36:49.350]

I’m not 100% happy with how that turned out.

[00:36:53.760]

It’s always one of those things

[00:36:54.600]

when you’re doing the webinar

[00:36:55.810]

and it worked better the day before,

[00:36:58.080]

but that’s okay.

[00:37:00.240]

So it’s probably a matter of playing around

[00:37:02.520]

with those mesh parameters,

[00:37:03.480]

playing around with those noise parameters again.

[00:37:06.200]

Trying to fit that noise possibly a little bit better

[00:37:11.860]

and tidying up those mesh constraints

[00:37:16.440]

and rerunning that model again,

[00:37:18.290]

until it makes a bit more geological sense in there as well.

[00:37:23.610]

But I hope that gives you a good rundown,

[00:37:25.270]

of the tools and using those tools.

[00:37:27.550]

And other things we can do obviously is,

[00:37:30.450]

is play around with some of these

[00:37:32.090]

model building parameters in there.

[00:37:34.090]

Also, I didn’t quite go through those,

[00:37:35.740]

but there are some settings if we want

[00:37:38.540]

to run that inversion again.

[00:37:39.780]

We could set that as a starting model, for example,

[00:37:43.990]

just to quickly show that, I’d right click, modify,

[00:37:47.770]

set that final inversion result actually

[00:37:50.550]

as our starting model.

[00:37:52.160]

And we could use that then to,

[00:37:56.070]

to run the model again

[00:37:57.060]

and try and refine this a little bit further

[00:37:58.600]

rather than having to start

[00:37:59.530]

from the starting point.

[00:38:00.590]

So that could be another first step as well,

[00:38:02.200]

even if we actually resize the model mesh,

[00:38:03.980]

we can still run that.

[00:38:05.360]

So there’s a few steps to look at there,

[00:38:09.360]

as I’m sure everyone knows inversion

[00:38:10.780]

is an iterative process.

[00:38:12.580]

It’s a matter of, you know,

[00:38:14.260]

playing around with these constraints,

[00:38:15.370]

trying a few different models,

[00:38:17.210]

but the power of, you know, these tools

[00:38:19.860]

really is that it gives you in the EM utilities

[00:38:22.320]

more options to view those results.

[00:38:25.200]

And to recalculate that noise

[00:38:27.990]

based on sort of what you see as fitting

[00:38:30.430]

to the geology and the system parameters that are there,

[00:38:35.400]

kind of go through how to,

[00:38:36.930]

we went through how to set up the system in VOXI as well,

[00:38:41.330]

and ready for inversion.

[00:38:43.050]

And then obviously running that on the cloud,

[00:38:46.130]

means we can speed this up.

[00:38:47.520]

So that was 25 minutes to run that inversion,

[00:38:49.610]

we can run it again, get a better result

[00:38:52.560]

and just continue that process as well,

[00:38:58.280]

and get straight into the geological modeling

[00:39:01.360]

and adding value using that,

[00:39:03.590]

that geophysics data as well.

[00:39:05.370]

So hope everyone enjoyed that webinar today.